Revision as of 16:04, 30 April 2014

Bayes rule in practice: definition and parameter estimation

A slecture by ECE student Chuohao Tang

Partly based on the ECE662 Spring 2014 lecture material of Prof. Mireille Boutin.

Content:

1 Bayes rule for Gaussian data

Assume we have two classes $ c_1, c_2 $, and we want to classify D-dimensional data $ \left\{{\mathbf{x}}\right\} $ into these two classes. To do so, we examine the posterior probabilities of the two classes given the data, $ p(c_i|\mathbf{x}) $, where $ i=1,2 $. Using Bayes' theorem, these probabilities can be expressed in the form

$ p(c_i|\mathbf{x})=\frac{p(\mathbf{x}|c_i)P(c_i)}{p(\mathbf{x})} $

where $ P(c_i) $ is the prior probability for class $ c_i $.

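We will classify the data to $ c_1 $ if

$ p(c_1|\mathbf{x})\geq p(c_2|\mathbf{x})\ \Leftrightarrow\ g(\mathbf{x})=\ln\frac{p(\mathbf{x}|c_1)P(c_1)}{p(\mathbf{x}|c_2)P(c_2)}\geq 0 $

and vice versa. Here $ g({\mathbf{x}}) $ is the discriminant function.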

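For a D-dimensional vector $ \mathbf{x} $, the multivariate Gaussian distribution takes the form

$ \mathcal{N}(\mathbf{x}|\mathbf{\mu},\mathbf{\Sigma})=\frac{1}{(2\pi)^{D/2}}\frac{1}{|\mathbf{\Sigma}|^{1/2}}\exp\left\{-\frac{1}{2}(\mathbf{x}-\mathbf{\mu})^T\mathbf{\Sigma}^{-1}(\mathbf{x}-\mathbf{\mu})\right\} $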

where $ \mathbf{\mu} $ is a D-dimensional mean vector, $ \mathbf{\Sigma} $ is a D×D covariance matrix, and $ |\mathbf{\Sigma}| $ denotes the determinant of $ \mathbf{\Sigma} $.

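Then, for Gaussian class-conditional densities with means $ \mathbf{\mu}_i $ and covariances $ \mathbf{\Sigma}_i $, the discriminant function $ g(\mathbf{x})=\ln[p(\mathbf{x}|c_1)P(c_1)]-\ln[p(\mathbf{x}|c_2)P(c_2)] $ becomes

$ g(\mathbf{x})=-\frac{1}{2}(\mathbf{x}-\mathbf{\mu}_1)^T\mathbf{\Sigma}_1^{-1}(\mathbf{x}-\mathbf{\mu}_1)+\frac{1}{2}(\mathbf{x}-\mathbf{\mu}_2)^T\mathbf{\Sigma}_2^{-1}(\mathbf{x}-\mathbf{\mu}_2)-\frac{1}{2}\ln\frac{|\mathbf{\Sigma}_1|}{|\mathbf{\Sigma}_2|}+\ln\frac{P(c_1)}{P(c_2)} $

So if $ g({\mathbf{x}})\geq 0 $, decide $ c_1 $; if $ g({\mathbf{x}}) < 0 $, decide $ c_2 $.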

For a k-class classification problem, we decide that the data belongs to $ c_i $, where $ i=1,...,k $, if

$ p(\mathbf{x}|c_i)P(c_i)\geq p(\mathbf{x}|c_j)P(c_j)\quad \text{for all } j=1,...,k $
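The two-class Gaussian discriminant described in this section can be sketched in a few lines of NumPy. This is a minimal illustration rather than the lecture's own code: the function names `log_gaussian` and `discriminant` and the numerical parameters are assumptions chosen for the example.

```python
import numpy as np

def log_gaussian(x, mu, sigma):
    """Log of the multivariate Gaussian density N(x | mu, sigma)."""
    d = mu.shape[0]
    diff = x - mu
    # slogdet avoids overflow/underflow that log(det(sigma)) can suffer
    _, logdet = np.linalg.slogdet(sigma)
    # Solve sigma * z = diff rather than forming the explicit inverse
    maha = diff @ np.linalg.solve(sigma, diff)
    return -0.5 * (d * np.log(2 * np.pi) + logdet + maha)

def discriminant(x, mu1, sigma1, prior1, mu2, sigma2, prior2):
    """g(x) = ln[p(x|c1)P(c1)] - ln[p(x|c2)P(c2)]; g(x) >= 0 means decide c1."""
    return (log_gaussian(x, mu1, sigma1) + np.log(prior1)
            - log_gaussian(x, mu2, sigma2) - np.log(prior2))

# Hypothetical 2-D parameters, chosen only for illustration
mu1, mu2 = np.array([0.0, 0.0]), np.array([3.0, 3.0])
sigma = np.eye(2)
x = np.array([0.5, 0.2])

g = discriminant(x, mu1, sigma, 0.5, mu2, sigma, 0.5)
decision = "c1" if g >= 0 else "c2"
print(g, decision)
```

Working in the log domain keeps the comparison numerically stable when the densities are very small, which is common in higher dimensions.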

2 Procedure

- Obtain training and testing data

We divide the sample data into a training set and a testing set. The training data is used to estimate the model parameters, and the testing data is used to evaluate the accuracy of the classifier.

- Fit a Gaussian model to each class

- Parameter estimation for the mean and covariance

- Estimate class priors

We will discuss details to estimate these parameters in the following section.

- Calculate and decide

After obtaining the estimated parameters, we can calculate and decide which class a testing sample belongs to using Bayes rule.

$ \begin{align}&\text{if } p({\mathbf{x}}|c_1)P(c_1)>p({\mathbf{x}}|c_2)P(c_2),\ \text{decide } c_1\\ &\text{if } p({\mathbf{x}}|c_1)P(c_1)<p({\mathbf{x}}|c_2)P(c_2),\ \text{decide } c_2 \end{align} $
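The whole procedure (split, fit, decide) might look like the following sketch. It assumes synthetic two-class 2-D Gaussian data, a 70/30 split, and a helper named `log_score`; none of these come from the lecture and they are used here only to make the steps concrete.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic two-class 2-D data (illustrative assumption, not the lecture's data)
X1 = rng.multivariate_normal([0.0, 0.0], np.eye(2), size=300)
X2 = rng.multivariate_normal([4.0, 4.0], np.eye(2), size=200)
X = np.vstack([X1, X2])
y = np.concatenate([np.zeros(300, dtype=int), np.ones(200, dtype=int)])

# Step 1: shuffle, then split into training (70%) and testing (30%) sets
perm = rng.permutation(len(X))
n_train = int(0.7 * len(X))
train, test = perm[:n_train], perm[n_train:]

# Step 2: fit a Gaussian and a prior to each class using training data only
params = {}
for c in (0, 1):
    Xc = X[train][y[train] == c]
    mu = Xc.mean(axis=0)
    diff = Xc - mu
    sigma = diff.T @ diff / len(Xc)   # ML covariance (divides by N)
    prior = len(Xc) / n_train         # fraction of training samples in class c
    params[c] = (mu, sigma, prior)

def log_score(x, mu, sigma, prior):
    """ln[p(x|c)P(c)] up to the constant -D/2 ln(2*pi) shared by all classes."""
    diff = x - mu
    _, logdet = np.linalg.slogdet(sigma)
    return -0.5 * (logdet + diff @ np.linalg.solve(sigma, diff)) + np.log(prior)

# Step 3: for each test sample, decide the class with the larger p(x|c)P(c)
pred = np.array([max(params, key=lambda c: log_score(x, *params[c]))
                 for x in X[test]])
accuracy = np.mean(pred == y[test])
print(f"test accuracy: {accuracy:.3f}")
```

Because the parameters are estimated from the training set only, the accuracy on the held-out testing set gives an honest estimate of how the classifier behaves on new data.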

3 Parameter estimation

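Given a data set $ \mathbf{X}=(\mathbf{x}_1,...,\mathbf{x}_N)^T $ in which the observations $ \{{\mathbf{x}_n}\} $ are assumed to be drawn independently from a D-dimensional multivariate Gaussian distribution, we can estimate the parameters of the distribution by maximum likelihood. The log likelihood function is given by

$ \ln p(\mathbf{X}|\mathbf{\mu},\mathbf{\Sigma})=-\frac{ND}{2}\ln(2\pi)-\frac{N}{2}\ln|\mathbf{\Sigma}|-\frac{1}{2}\sum_{n=1}^{N}(\mathbf{x}_n-\mathbf{\mu})^T\mathbf{\Sigma}^{-1}(\mathbf{x}_n-\mathbf{\mu}) $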

By simple rearrangement, we see that the likelihood function depends on the data set only through the two quantities

$ \sum_{n=1}^{N}\mathbf{x}_n,\qquad \sum_{n=1}^{N}\mathbf{x}_n\mathbf{x}_n^T $

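These are the sufficient statistics for the Gaussian distribution. The derivative of the log likelihood with respect to $ \mathbf{\mu} $ is

$ \frac{\partial}{\partial\mathbf{\mu}}\ln p(\mathbf{X}|\mathbf{\mu},\mathbf{\Sigma})=\sum_{n=1}^{N}\mathbf{\Sigma}^{-1}(\mathbf{x}_n-\mathbf{\mu}) $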

and setting this derivative to zero, we obtain the solution for the maximum likelihood estimate of the mean

$ \mathbf{\mu}_{ML}=\frac{1}{N}\sum_{n=1}^{N}\mathbf{x}_n $

Similarly, setting the derivative of the log likelihood with respect to $ \mathbf{\Sigma} $ to zero, we obtain the maximum likelihood estimate of the covariance

$ \mathbf{\Sigma}_{ML}=\frac{1}{N}\sum_{n=1}^{N}(\mathbf{x}_n-\mathbf{\mu}_{ML})(\mathbf{x}_n-\mathbf{\mu}_{ML})^T $

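To estimate the class priors, we count how many training samples belong to each class, so

$ P(c_i)=\frac{N_i}{N} $

where $ N_i $ is the number of training samples in class $ c_i $ and $ N $ is the total number of training samples over all classes.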
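The maximum likelihood estimates of the mean, covariance, and class priors translate directly into NumPy. The sketch below is illustrative only: the function names `fit_gaussian_ml` and `class_priors` and the toy arrays are assumptions, not part of the lecture material.

```python
import numpy as np

def fit_gaussian_ml(X):
    """Maximum likelihood mean and covariance of the rows of X (shape N x D)."""
    N = X.shape[0]
    mu = X.sum(axis=0) / N        # (1/N) * sum of x_n
    diff = X - mu
    sigma = diff.T @ diff / N     # (1/N) * sum of (x_n - mu)(x_n - mu)^T
    return mu, sigma

def class_priors(labels):
    """Estimate P(c_i) as the fraction of samples carrying each label."""
    classes, counts = np.unique(labels, return_counts=True)
    return dict(zip(classes.tolist(), (counts / counts.sum()).tolist()))

# Toy data, chosen only for illustration
X = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 9.0]])
mu, sigma = fit_gaussian_ml(X)
priors = class_priors(np.array([1, 1, 2, 2, 2]))
print(mu, sigma, priors)
```

Note that the maximum likelihood covariance divides by N, whereas `np.cov` divides by N-1 by default; passing `bias=True` to `np.cov` gives the same result as `fit_gaussian_ml`.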

4 Example

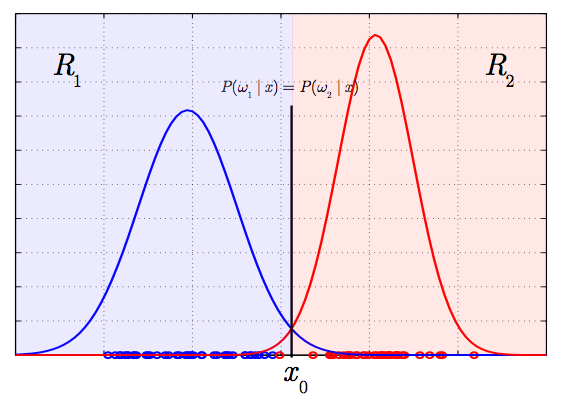

- 1-D case

- 2-D case

5 Conclusion
