The Comer Lectures on Random Variables and Signals

Topic 7: Random Variables: Conditional Distributions

We will now learn how to represent conditional probabilities using the cdf/pdf/pmf. This will give us some of the most powerful tools for working with random variables: the conditional pdf and the conditional pmf.

Recall that

$$ P(A|B) = \frac{P(A\cap B)}{P(B)} $$

∀ A,B ∈ F with P(B) > 0.

We will consider this conditional probability when A = {X≤x} for a continuous random variable or A = {X=x} for a discrete random variable.

Discrete X

If P(B)>0, then let

$$ p_X(x|B) \equiv P(X=x|B) = \frac{P(\{X=x\}\cap B)}{P(B)} $$

∀x ∈ R, for a given B ∈ F.
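As a small illustration of this definition, here is a sketch assuming X is the outcome of a fair six-sided die and B = {X is even} (both are assumptions made for the example, not part of the lecture). Because B is described here by a set of x values, $ \{X=x\}\cap B $ is either {X=x} (when x ∈ B) or the empty set.

```python
from fractions import Fraction

# Assumed example: pmf of a fair six-sided die, p_X(x) = 1/6 for x in {1,...,6}
p_X = {x: Fraction(1, 6) for x in range(1, 7)}

# Assumed conditioning event B = {X is even}, given as the set of x values it contains
B = {2, 4, 6}

# P(B) = sum of p_X(x) over the x values in B
P_B = sum(p_X[x] for x in B)

def cond_pmf(x):
    """p_X(x|B) = P({X = x} ∩ B) / P(B); the intersection is empty unless x is in B."""
    joint = p_X[x] if x in B else Fraction(0)
    return joint / P_B

for x in range(1, 7):
    print(x, cond_pmf(x))
```

The conditional pmf puts mass 1/3 on each even face and 0 on each odd face, and it still sums to 1, as any pmf must.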

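The function $ p_X(\cdot|B) $ is the conditional pmf of X given B. Recall Bayes' Theorem and the Total Probability Law (TPL):

$$ P(A|B) = \frac{P(B|A)P(A)}{P(B)} $$

for P(A) > 0 and P(B) > 0, and

$$ P(B) = \sum_{i=1}^n P(B|A_i)P(A_i) $$

if $ A_1,...,A_n $ form a partition of S and $ P(A_i)>0 $ ∀i.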

In the case A = {X=x}, we get

$$ p_X(x|B) = \frac{P(B|X=x)\,p_X(x)}{P(B)} $$

where $ p_X(x|B) $ is the conditional pmf of X given B and $ p_X(x) $ is the pmf of X. Note that Bayes' Theorem in this context requires not only that P(B) > 0 but also that P(X = x) > 0.
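A minimal numeric sketch of Bayes' Theorem for a pmf, under an assumed setup: X is uniform on {1, 2, 3}, and B is some event whose conditional probability given X = x is x/4 (for instance, a second experiment whose success probability depends on X). The values are hypothetical. P(B) is obtained from the TPL using the partition {X=1}, {X=2}, {X=3}.

```python
from fractions import Fraction

# Hypothetical setup: X uniform on {1, 2, 3}, and P(B | X = x) = x/4
p_X = {x: Fraction(1, 3) for x in (1, 2, 3)}
P_B_given = {x: Fraction(x, 4) for x in p_X}

# TPL over the partition {X = 1}, {X = 2}, {X = 3}: P(B) = sum_x P(B|X=x) p_X(x)
P_B = sum(P_B_given[x] * p_X[x] for x in p_X)

# Bayes' Theorem: p_X(x|B) = P(B|X=x) p_X(x) / P(B)
cond = {x: P_B_given[x] * p_X[x] / P_B for x in p_X}

print(P_B)  # 1/2
for x in p_X:
    print(x, cond[x])
```

Here P(B) = 1/2 and $ p_X(x|B) = x/6 $: observing B shifts probability toward the larger values of X, which make B more likely.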

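We can also use the TPL to get

$$ p_X(x) = \sum_{i=1}^n p_X(x|A_i)P(A_i) $$

if $ A_1,...,A_n $ form a partition of S and $ P(A_i)>0 $ ∀i.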
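The TPL lets us build the pmf of X from conditional pmfs on a partition. In this sketch the partition is hypothetical: $ A_1 $ = {a fair die is rolled} and $ A_2 $ = {a loaded die is rolled}, each with probability 1/2, where the loaded die shows 6 half of the time and each other face with probability 1/10.

```python
from fractions import Fraction

# Hypothetical partition: A_1 = {fair die chosen}, A_2 = {loaded die chosen}
P_A = {1: Fraction(1, 2), 2: Fraction(1, 2)}

# Conditional pmfs p_X(x|A_i); the loaded die shows 6 with probability 1/2
pmf_given = {
    1: {x: Fraction(1, 6) for x in range(1, 7)},
    2: {**{x: Fraction(1, 10) for x in range(1, 6)}, 6: Fraction(1, 2)},
}

# TPL: p_X(x) = sum_i p_X(x|A_i) P(A_i)
p_X = {x: sum(pmf_given[i][x] * P_A[i] for i in P_A) for x in range(1, 7)}

for x in range(1, 7):
    print(x, p_X[x])
```

The result gives probability 2/15 to each of the faces 1 through 5 and 1/3 to face 6, a weighted average of the two conditional pmfs.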

Continuous X

Let A = {X≤x}. Then if P(B)>0, B ∈ F, define

$$ F_X(x|B) \equiv P(X\leq x|B) = \frac{P(\{X\leq x\}\cap B)}{P(B)} $$

as the conditional cdf of X given B.
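One way to build intuition for the conditional cdf is the relative-frequency view: among the trials in which B occurs, count the fraction in which X ≤ x. The sketch below assumes X and Y are independent Uniform(0, 1) random variables and B = {Y < X} (an illustrative choice). For this case $ P(\{X\leq x\}\cap B) = \int_0^x t\,dt = x^2/2 $ and P(B) = 1/2, so $ F_X(x|B) = x^2 $ for 0 ≤ x ≤ 1.

```python
import random

def conditional_cdf_estimate(x, n=100_000, seed=0):
    """Monte Carlo estimate of F_X(x|B) = P({X <= x} ∩ B) / P(B).

    Assumed example: X, Y independent Uniform(0, 1) and B = {Y < X}.
    """
    rng = random.Random(seed)
    count_B = 0       # trials in which B occurred
    count_joint = 0   # trials in which both X <= x and B occurred
    for _ in range(n):
        X = rng.random()
        Y = rng.random()
        if Y < X:
            count_B += 1
            if X <= x:
                count_joint += 1
    # ratio of relative frequencies approximates P({X <= x} ∩ B) / P(B)
    return count_joint / count_B

for x in (0.25, 0.5, 0.75):
    print(x, round(conditional_cdf_estimate(x), 3), x ** 2)
```

The estimates should land close to $ x^2 $; the unconditional cdf would be x, so conditioning on B shifts probability toward larger values of X.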

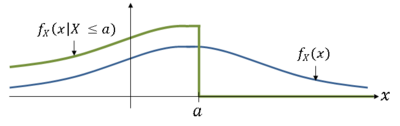

The conditional pdf of X given B is then

$$ f_X(x|B) = \frac{d}{dx}F_X(x|B) $$

Note that B may be an event involving X.
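As a sketch of an event B that involves X, assume X is Exponential(1) and B = {X > 1} (an illustrative choice). Then $ F_X(x|B) = 0 $ for x ≤ 1 and $ F_X(x|B) = \frac{F_X(x)-F_X(1)}{1-F_X(1)} = 1-e^{-(x-1)} $ for x > 1. The code approximates the conditional pdf by a central difference of the conditional cdf and compares it with the closed form $ e^{-(x-1)} $.

```python
import math

def F_X(x):
    """cdf of an Exponential(1) random variable (assumed example)."""
    return 1.0 - math.exp(-x) if x > 0 else 0.0

def F_X_given_B(x, b=1.0):
    """F_X(x|B) for B = {X > b}: (F_X(x) - F_X(b)) / (1 - F_X(b)) for x > b, else 0."""
    if x <= b:
        return 0.0
    return (F_X(x) - F_X(b)) / (1.0 - F_X(b))

def f_X_given_B(x, b=1.0, h=1e-6):
    """Conditional pdf approximated by a central difference of the conditional cdf."""
    return (F_X_given_B(x + h, b) - F_X_given_B(x - h, b)) / (2 * h)

for x in (1.5, 2.0, 3.0):
    # numerical derivative vs. the closed form exp(-(x - 1)) for x > 1
    print(x, f_X_given_B(x), math.exp(-(x - 1.0)))
```

The result is the Exponential(1) pdf shifted to start at 1, which reflects the memoryless property of the exponential distribution.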

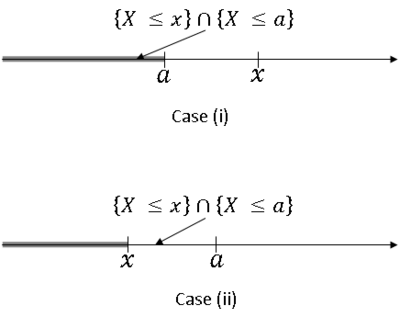

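Example: let B = {X ≤ a} for some a ∈ R with $ F_X(a) > 0 $. Then

$$ F_X(x|X\leq a) = \frac{P(\{X\leq x\}\cap\{X\leq a\})}{P(X\leq a)} $$

Two cases:

- Case (i): $ x \geq a $. Then $ \{X\leq x\}\cap\{X\leq a\} = \{X\leq a\} $, so $ F_X(x|X\leq a) = \frac{P(X\leq a)}{P(X\leq a)} = 1 $.

- Case (ii): $ x < a $. Then $ \{X\leq x\}\cap\{X\leq a\} = \{X\leq x\} $, so $ F_X(x|X\leq a) = \frac{F_X(x)}{F_X(a)} $.

Now, differentiating with respect to x,

$$ f_X(x|X\leq a) = \begin{cases} \frac{f_X(x)}{F_X(a)}, & x < a \\ 0, & x \geq a \end{cases} $$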
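To make the example B = {X ≤ a} concrete, the sketch below assumes X is a standard normal random variable and a = 0.5 (both choices are illustrative). It codes $ F_X(x|X\leq a) = F_X(x)/F_X(a) $ for x < a (and 1 for x ≥ a), the matching pdf $ f_X(x)/F_X(a) $ for x < a (and 0 otherwise), and checks with a midpoint-rule integral that the conditional pdf integrates to 1.

```python
import math

def Phi(x):
    """Standard normal cdf, written with math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def phi(x):
    """Standard normal pdf."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def cond_cdf(x, a):
    """F_X(x | X <= a): F_X(x)/F_X(a) for x < a, and 1 for x >= a."""
    return Phi(x) / Phi(a) if x < a else 1.0

def cond_pdf(x, a):
    """f_X(x | X <= a): f_X(x)/F_X(a) for x < a, and 0 for x >= a."""
    return phi(x) / Phi(a) if x < a else 0.0

# Midpoint rule on [-10, a]; the standard normal mass below -10 is negligible
a = 0.5
n = 100_000
lo = -10.0
dx = (a - lo) / n
total = sum(cond_pdf(lo + (k + 0.5) * dx, a) for k in range(n)) * dx
print(total)
```

Dividing by $ F_X(a) $ is exactly what rescales the part of the pdf to the left of a so that it integrates to 1.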

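Bayes' Theorem for continuous X:

We can easily see that

$$ F_X(x|B) = \frac{P(B|X\leq x)F_X(x)}{P(B)} $$

from the previous version of Bayes' Theorem (this requires P(B) > 0 and $ F_X(x) > 0 $), and that

$$ F_X(x) = \sum_{i=1}^n F_X(x|A_i)P(A_i), \qquad f_X(x) = \sum_{i=1}^n f_X(x|A_i)P(A_i) $$

if $ A_1,...,A_n $ form a partition of S and P($ A_i $) > 0 ∀$ i $, from TPL.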
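A sketch that exercises both results on an assumed two-cell partition: $ P(A_1) = 0.3 $, $ P(A_2) = 0.7 $, with X given $ A_1 $ distributed N(-1, 1) and X given $ A_2 $ distributed N(2, 1). The TPL gives $ F_X(x) $ as a mixture, and Bayes' Theorem, solved for $ P(A_1|X\leq x) = F_X(x|A_1)P(A_1)/F_X(x) $, is compared with a relative-frequency estimate from simulated (partition cell, X) pairs.

```python
import math
import random

def Phi(x, mu=0.0, sigma=1.0):
    """Normal cdf with mean mu and standard deviation sigma."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

# Assumed partition: P(A_1) = 0.3, P(A_2) = 0.7; X|A_1 ~ N(-1, 1), X|A_2 ~ N(2, 1)
P_A = [0.3, 0.7]
means = [-1.0, 2.0]

def F_X_given(x, i):
    """Conditional cdf F_X(x|A_i) (index i = 0 for A_1, 1 for A_2)."""
    return Phi(x, means[i])

def F_X(x):
    """TPL: F_X(x) = sum_i F_X(x|A_i) P(A_i)."""
    return sum(F_X_given(x, i) * P_A[i] for i in range(2))

def P_A1_given_X_le(x):
    """Bayes' Theorem solved for P(A_1 | X <= x) = F_X(x|A_1) P(A_1) / F_X(x)."""
    return F_X_given(x, 0) * P_A[0] / F_X(x)

def simulate_P_A1_given_X_le(x, n=100_000, seed=1):
    """Relative-frequency estimate of P(A_1 | X <= x)."""
    rng = random.Random(seed)
    hits = 0      # trials with X <= x
    hits_A1 = 0   # trials with X <= x and A_1
    for _ in range(n):
        i = 0 if rng.random() < P_A[0] else 1   # draw the partition cell
        X = rng.gauss(means[i], 1.0)           # draw X from F_X(.|A_i)
        if X <= x:
            hits += 1
            if i == 0:
                hits_A1 += 1
    return hits_A1 / hits

for x in (0.0, 1.0):
    print(x, P_A1_given_X_le(x), simulate_P_A1_given_X_le(x))
```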

But what we often want to know is a probability of the type P(A|X=x) for some A∈F. We could define this as

$$ P(A|X=x) \equiv \frac{P(A\cap\{X=x\})}{P(X=x)} $$

but the right-hand side (rhs) would be 0/0, since P(X=x) = 0 for continuous X.

Instead, we will use the following definition in this case:

$$ P(A|X=x) \equiv \lim_{\Delta x\rightarrow 0}P(A|x<X\leq x+\Delta x) $$

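using our standard definition of conditional probability for the rhs. This leads to the following derivation (assuming P(A) > 0 and $ f_X(x) > 0 $):

$$ \begin{aligned} P(A|X=x) &= \lim_{\Delta x\rightarrow 0}\frac{P(x<X\leq x+\Delta x|A)P(A)}{P(x<X\leq x+\Delta x)} \\ &= \lim_{\Delta x\rightarrow 0}\frac{[F_X(x+\Delta x|A)-F_X(x|A)]P(A)}{F_X(x+\Delta x)-F_X(x)} \\ &= \lim_{\Delta x\rightarrow 0}\frac{\frac{F_X(x+\Delta x|A)-F_X(x|A)}{\Delta x}P(A)}{\frac{F_X(x+\Delta x)-F_X(x)}{\Delta x}} \\ &= \frac{f_X(x|A)P(A)}{f_X(x)} \end{aligned} $$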
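The limit in this derivation can be watched numerically. The sketch assumes P(A) = 0.3, X given A distributed N(-1, 1), and X given the complement of A distributed N(2, 1) (illustrative choices). It evaluates $ P(A|x<X\leq x+\Delta x) = \frac{[F_X(x+\Delta x|A)-F_X(x|A)]P(A)}{F_X(x+\Delta x)-F_X(x)} $ for shrinking $ \Delta x $ and compares it with $ f_X(x|A)P(A)/f_X(x) $.

```python
import math

def Phi(x, mu=0.0):
    """Normal cdf with mean mu and unit variance."""
    return 0.5 * (1.0 + math.erf((x - mu) / math.sqrt(2.0)))

def phi(x, mu=0.0):
    """Normal pdf with mean mu and unit variance."""
    return math.exp(-0.5 * (x - mu) ** 2) / math.sqrt(2.0 * math.pi)

# Assumed example: P(A) = 0.3, X|A ~ N(-1, 1), X|A^c ~ N(2, 1)
P_A = 0.3

def F_X_given_A(x):
    return Phi(x, -1.0)

def F_X(x):
    # TPL over the partition {A, A^c}
    return P_A * Phi(x, -1.0) + (1 - P_A) * Phi(x, 2.0)

def f_X(x):
    return P_A * phi(x, -1.0) + (1 - P_A) * phi(x, 2.0)

def P_A_given_interval(x, dx):
    """P(A | x < X <= x + dx), from the second line of the derivation."""
    return (F_X_given_A(x + dx) - F_X_given_A(x)) * P_A / (F_X(x + dx) - F_X(x))

x = 0.5
limit = phi(x, -1.0) * P_A / f_X(x)   # f_X(x|A) P(A) / f_X(x)
for dx in (1.0, 0.1, 0.01, 0.001):
    print(dx, P_A_given_interval(x, dx), limit)
```

At x = 0.5 the two component densities are equal (0.5 is the midpoint of -1 and 2), so the limit is just P(A) = 0.3; the interval probabilities approach it roughly in proportion to $ \Delta x $.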

So,

$$ f_X(x|A) = \frac{P(A|X=x)f_X(x)}{P(A)} $$

This is how Bayes' Theorem is normally stated for a continuous random variable X and an event A∈F with P(A) > 0.
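A classic use of this form, sketched under assumed values: let X ~ Uniform(0, 1) be the unknown bias of a coin and A = {the coin shows heads}, so P(A|X=x) = x. Then P(A) = 1/2 (the integral of x over [0, 1]), and Bayes' Theorem gives $ f_X(x|A) = \frac{x\cdot 1}{1/2} = 2x $ on [0, 1]. The simulation estimates $ f_X(x|A) $ on a few bins from the X values of the trials that came up heads.

```python
import random

def posterior_density_estimate(lo, hi, n=200_000, seed=2):
    """Estimate f_X(x|A) on the bin (lo, hi] by simulation.

    Assumed example: X ~ Uniform(0, 1) is a coin's bias and A = {heads},
    so P(A | X = x) = x.
    """
    rng = random.Random(seed)
    heads = 0
    heads_in_bin = 0
    for _ in range(n):
        X = rng.random()
        if rng.random() < X:      # heads with probability X
            heads += 1
            if lo < X <= hi:
                heads_in_bin += 1
    # P(lo < X <= hi | A) divided by the bin width approximates f_X(x|A) on the bin
    return heads_in_bin / heads / (hi - lo)

def bayes_density(x):
    """Bayes: f_X(x|A) = P(A|X=x) f_X(x) / P(A) = x * 1 / (1/2) = 2x on [0, 1]."""
    return 2.0 * x if 0.0 <= x <= 1.0 else 0.0

for lo in (0.1, 0.5, 0.8):
    hi = lo + 0.1
    print((lo, hi), round(posterior_density_estimate(lo, hi), 3), bayes_density((lo + hi) / 2))
```

Because $ 2x $ is linear, its average over each bin equals its value at the bin midpoint, which is why the midpoint is used for comparison.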

We will revisit Bayes' Theorem one more time when we discuss conditional distributions for two random variables.

References

- M. Comer. ECE 600. Class Lecture. Random Variables and Signals. Faculty of Electrical Engineering, Purdue University. Fall 2013.
