Back to all ECE 600 notes

Previous Topic: Conditional Distributions

Next Topic: Expectation

The Comer Lectures on Random Variables and Signals

Topic 8: Functions of Random Variables

We often do not work with the random variable we observe directly, but with some function of that random variable. So, instead of working with a random variable X, we might instead have some random variable Y=g(X) for some function g:R → R.

In this case, we might model Y directly to get f$ _Y $(y), especially if we do not know g. Or we might have a model for X and find f$ _Y $(y) (or p$ _Y $(y)) as a function of f$ _X $ (or p$ _X $) and g.

We will discuss the latter approach here.

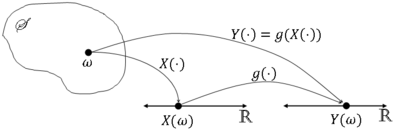

More formally, let X be a random variable on (S,F,P) and consider a mapping g:R → R. Then let $ Y(\omega) = g(X(\omega)) $ ∀ $ \omega $ ∈ S.

We normally write this as Y=g(X).

Graphically,

Is Y a random variable? For this to hold, Y$ ^{-1} $(A) ≡ {$ \omega $ ∈ S: Y$ (\omega) $ ∈ A} = {$ \omega $ ∈ S: g(X$ (\omega) $) ∈ A} must be an element of F ∀A ∈ B(R) (Y must be Borel measurable).

We will only consider functions g in this class for which Y$ ^{-1} $(A) ∈ F ∀A ∈ B(R), so that if Y=g(X) for some random variable X, Y will be a random variable.

What is the distribution of Y? Consider 3 cases:

- X discrete, Y discrete

- X continuous, Y discrete

- X continuous, Y continuous

Note: you cannot have a continuous Y from a discrete X.

Case 1: X and Y Discrete

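Let $ R_X $ ≡ X(S) be the range space of X and $ R_Y $ ≡ g(X(S)) be the range space of Y (i.e. the image of X(S) under g). Then the pmf of Y is

$ p_Y(y) = P(Y=y) = P(g(X)=y) \quad \forall y \in R_Y $

But this means that

$ p_Y(y) = \sum_{x \in R_X:\, g(x)=y} p_X(x) \quad \forall y \in R_Y $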

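Example $ \quad $ Let X be the value rolled on a die and

$ Y = \begin{cases} 0 & X \text{ even} \\ 1 & X \text{ odd} \end{cases} $

Then R$ _X $ = {1,2,3,4,5,6}, R$ _Y $ = {0,1}, and g(x) = x % 2.

Now

$ p_Y(0) = p_X(2) + p_X(4) + p_X(6) $

$ p_Y(1) = p_X(1) + p_X(3) + p_X(5) $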
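The sum over all x with g(x) = y can be sketched in a few lines of Python. This is only an illustration: the helper name `pmf_of_function` is hypothetical, and the die is assumed to be fair (the notes do not say so), so p$ _X $(x) = 1/6 for each face.

```python
from collections import defaultdict
from fractions import Fraction


def pmf_of_function(p_X, g):
    """Return the pmf of Y = g(X) by summing p_X(x) over all x with g(x) = y."""
    p_Y = defaultdict(Fraction)  # missing keys start at Fraction(0)
    for x, prob in p_X.items():
        p_Y[g(x)] += prob
    return dict(p_Y)


# Assumption: a fair six-sided die, so p_X(x) = 1/6 for x = 1, ..., 6.
p_X = {x: Fraction(1, 6) for x in range(1, 7)}

# g(x) = x % 2 maps even faces to 0 and odd faces to 1.
p_Y = pmf_of_function(p_X, lambda x: x % 2)
print(p_Y)
```

Exact fractions are used so that the probabilities in p$ _Y $ add up to exactly 1 without floating-point rounding.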

Case 2: X Continuous, Y Discrete

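The pmf of Y in this case is

$ p_Y(y) = P(Y=y) = P(g(X)=y) = P(X \in D_y) \quad \forall y \in R_Y $

where D$ _y $ ≡ {x ∈ R: g(x) = y}, i.e. for a given y ∈ R$ _Y $, D$ _y $ is the set of all x ∈ R such that g(x) = y.

Then,

$ p_Y(y) = \int_{D_y} f_X(x)\,dx $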

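Example Let g(x) = u(x - x$ _0 $) for some x$ _0 $ ∈ R, where u is the unit step function, and let Y=g(X). Then $ R_Y $ = {0,1} and, taking u(0) = 1 (since X is continuous, the value of u at 0 does not affect the probabilities),

$ D_0 = \{x: x < x_0\}, \quad D_1 = \{x: x \geq x_0\} $

So,

$ p_Y(0) = P(X < x_0) = F_X(x_0) $

$ p_Y(1) = P(X \geq x_0) = 1 - F_X(x_0) $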
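For the step-function example, integrating f$ _X $ over {x < x$ _0 $} and {x ≥ x$ _0 $} gives p$ _Y $(0) = F$ _X $(x$ _0 $) and p$ _Y $(1) = 1 - F$ _X $(x$ _0 $). The sketch below evaluates these under the assumption that X is standard normal; that choice of distribution and the helper names `std_normal_cdf` and `step_pmf` are illustrative and do not come from the lecture.

```python
import math


def std_normal_cdf(x):
    """cdf of a standard normal random variable (an assumed model for X)."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def step_pmf(F_X, x0):
    """pmf of Y = u(X - x0) for a continuous X with cdf F_X."""
    p0 = F_X(x0)  # P(Y = 0) = P(X < x0) = F_X(x0) because X is continuous
    return {0: p0, 1: 1.0 - p0}


p_Y = step_pmf(std_normal_cdf, 0.0)
print(p_Y)
```

Any other continuous cdf can be passed as `F_X`; only the threshold x$ _0 $ and the cdf value there matter.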

Case 3: X and Y Continuous

We will discuss 2 methods for finding f$ _Y $ in this case.

Approach 1

First, find the cdf F$ _Y $.

$ F_Y(y) = P(Y \leq y) = P(g(X) \leq y) = P(X \in D_y) $

where D$ _y $ = {x ∈ R: g(x) ≤ y}.

i.e. for a given y ∈ R, D$ _y $ is the set of all x ∈ R such that g(x) ≤ y.

Then

$ F_Y(y) = \int_{D_y} f_X(x)\,dx $

Differentiate F$ _Y $ to get f$ _Y $.

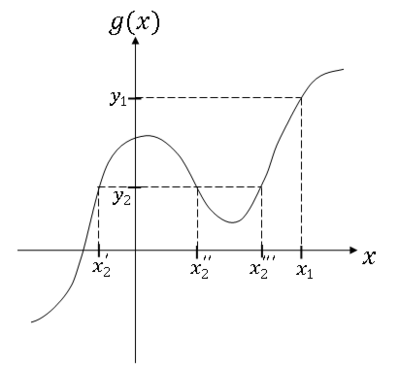

You can find D$ _y $ graphically or analytically.

Example

For y = y$ _1 $ and y = y$ _2 $,

Then

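Example Y = aX + b, a,b ∈ R, a ≠ 0

$ D_y = \{x: ax + b \leq y\} = \begin{cases} \{x: x \leq \frac{y-b}{a}\} & a > 0 \\ \{x: x \geq \frac{y-b}{a}\} & a < 0 \end{cases} $

So,

$ F_Y(y) = \begin{cases} F_X\left(\frac{y-b}{a}\right) & a > 0 \\ 1 - F_X\left(\frac{y-b}{a}\right) & a < 0 \end{cases} $

Then

$ f_Y(y) = \frac{1}{|a|} f_X\left(\frac{y-b}{a}\right) $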
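For Y = aX + b with a ≠ 0, differentiating the cdf gives f$ _Y $(y) = f$ _X $((y-b)/a)/|a|. The sketch below checks this numerically under the assumption that X is standard normal, in which case Y is known to be normal with mean b and standard deviation |a|. The helper names and the values of a and b are hypothetical.

```python
import math


def normal_pdf(x, mean=0.0, std=1.0):
    """pdf of a normal random variable; the defaults give the standard normal."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def linear_transform_pdf(f_X, a, b):
    """Return f_Y for Y = a*X + b using f_Y(y) = f_X((y - b)/a) / |a|."""
    if a == 0:
        raise ValueError("a must be nonzero")
    return lambda y: f_X((y - b) / a) / abs(a)


a, b = -2.0, 3.0  # illustrative values; a < 0 exercises the absolute value
f_Y = linear_transform_pdf(normal_pdf, a, b)

# Compare with the known density of a normal with mean b and std |a|.
max_error = max(
    abs(f_Y(y) - normal_pdf(y, mean=b, std=abs(a)))
    for y in [-4.0, 0.0, 3.0, 7.5]
)
print(max_error)
```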

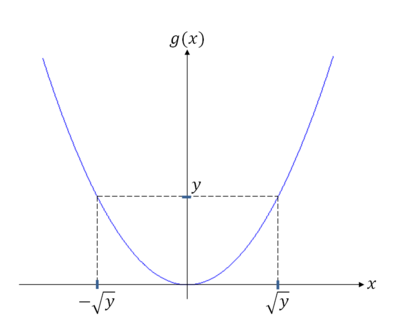

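Example Y = X$ ^2 $

For y < 0, D$ _y $ = ø

For y ≥ 0,

$ D_y = \{x: x^2 \leq y\} = \{x: -\sqrt{y} \leq x \leq \sqrt{y}\} $

So,

$ F_Y(y) = \begin{cases} F_X(\sqrt{y}) - F_X(-\sqrt{y}) & y \geq 0 \\ 0 & y < 0 \end{cases} $

and

$ f_Y(y) = \begin{cases} \frac{1}{2\sqrt{y}}\left[f_X(\sqrt{y}) + f_X(-\sqrt{y})\right] & y > 0 \\ 0 & y < 0 \end{cases} $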
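Approach 1 for Y = X$ ^2 $ can be checked numerically: for y > 0 the cdf is F$ _Y $(y) = F$ _X $($ \sqrt{y} $) - F$ _X $($ -\sqrt{y} $), and its derivative should match f$ _Y $(y) = [f$ _X $($ \sqrt{y} $) + f$ _X $($ -\sqrt{y} $)]/(2$ \sqrt{y} $). The sketch assumes a standard normal X and approximates the derivative with a central difference; the function names, the test point y and the step h are illustrative choices.

```python
import math


def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def std_normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def cdf_of_square(F_X, y):
    """F_Y(y) for Y = X^2: integrate f_X over D_y = [-sqrt(y), sqrt(y)]."""
    if y < 0:
        return 0.0  # D_y is empty
    r = math.sqrt(y)
    return F_X(r) - F_X(-r)


def pdf_of_square(f_X, y):
    """f_Y(y) for Y = X^2, obtained by differentiating F_Y for y > 0."""
    if y <= 0:
        return 0.0
    r = math.sqrt(y)
    return (f_X(r) + f_X(-r)) / (2.0 * r)


y, h = 1.5, 1e-5
# Central-difference approximation to dF_Y/dy at y.
numeric = (cdf_of_square(std_normal_cdf, y + h)
           - cdf_of_square(std_normal_cdf, y - h)) / (2.0 * h)
analytic = pdf_of_square(std_normal_pdf, y)
print(numeric, analytic)
```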

For a general g, we need to find subsets of the y-axis on which D$ _y $ has the same form, and solve the problem separately on each subset.

Approach 2

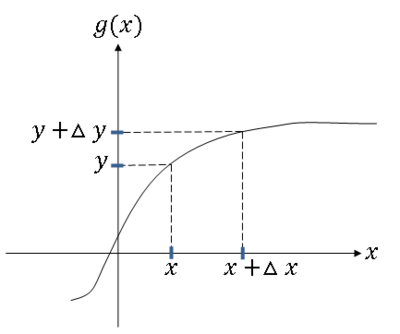

Use a formula for f$ _Y $ in terms of f$ _X $. To derive the formula, assume the inverse function g$ ^{-1} $ exists, so if y = g(x), then x = g$ ^{-1} $(y). Also assume g and g$ ^{-1} $ are differentiable. Then, if Y = g(X), we have that

$ f_Y(y) = f_X(g^{-1}(y)) \left| \frac{dg^{-1}(y)}{dy} \right| $

Proof:

First consider g strictly monotone increasing. (For a differentiable, and hence continuous, function defined on an interval, injectivity implies strict monotonicity, so it is sufficient to limit our analysis to monotone functions.)

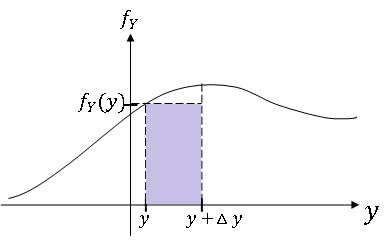

Since {y < Y ≤ y + Δy} = {x < X ≤ x + Δx}, we have that P(y < Y ≤ y + Δy) = P(x < X ≤ x + Δx).

Use the following approximations:

- P(y < Y ≤ y + Δy) ≈ f$ _Y $(y)Δy

- P(x < X ≤ x + Δx) ≈ f$ _X $(x)Δx

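Since the left hand sides are equal,

$ f_Y(y)\Delta y \approx f_X(x)\Delta x $

Now as Δy → 0, we also have that Δx → 0 since g$ ^{-1} $ is continuous, and the approximations above become equalities. We rename Δy, Δx as dy and dx respectively, so letting Δy → 0, we get

$ f_Y(y)\,dy = f_X(x)\,dx $

We normally write this as

$ f_Y(y) = f_X(x) \frac{dx}{dy}, \quad x = g^{-1}(y) $

A similar derivation for g monotone decreasing gives us the general result for invertible g:

$ f_Y(y) = f_X(x) \left| \frac{dx}{dy} \right|, \quad x = g^{-1}(y) $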

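Note this result can be extended to the case where y = g(x) has n solutions x$ _1 $,...,x$ _n $, in which case,

$ f_Y(y) = \sum_{i=1}^n \frac{f_X(x_i)}{|g'(x_i)|} $

For example, if Y = X$ ^2 $, then for y > 0 the solutions are x$ _1 $ = $ \sqrt{y} $ and x$ _2 $ = $ -\sqrt{y} $ with |g'(x$ _i $)| = 2$ \sqrt{y} $, so

$ f_Y(y) = \frac{1}{2\sqrt{y}}\left[f_X(\sqrt{y}) + f_X(-\sqrt{y})\right] $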
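The multi-solution form of the result, f$ _Y $(y) = Σ f$ _X $(x$ _i $)/|g'(x$ _i $)| over the solutions x$ _i $ of y = g(x), translates directly into code once the solutions are known. The sketch below is a minimal illustration, assuming a standard normal X; the helper `pdf_from_roots` is hypothetical and expects the caller to supply the solutions and the derivative of g. It is checked against two closed forms that hold under this assumption: the chi-square density with one degree of freedom for Y = X$ ^2 $, and the lognormal density for Y = e$ ^X $.

```python
import math


def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def pdf_from_roots(f_X, roots, dg):
    """Evaluate f_Y(y) = sum_i f_X(x_i) / |g'(x_i)| over the given solutions x_i."""
    return sum(f_X(x) / abs(dg(x)) for x in roots)


y = 2.0

# Y = X^2: two solutions, +sqrt(y) and -sqrt(y), with g'(x) = 2x.
roots_sq = [math.sqrt(y), -math.sqrt(y)]
f_Y_square = pdf_from_roots(std_normal_pdf, roots_sq, lambda x: 2.0 * x)
chi_square_1 = math.exp(-y / 2.0) / math.sqrt(2.0 * math.pi * y)

# Y = exp(X): g is invertible, so there is one solution x = ln(y), g'(x) = exp(x).
f_Y_exp = pdf_from_roots(std_normal_pdf, [math.log(y)], math.exp)
lognormal = math.exp(-0.5 * math.log(y) ** 2) / (y * math.sqrt(2.0 * math.pi))

print(f_Y_square, chi_square_1)
print(f_Y_exp, lognormal)
```

Finding the solutions of y = g(x) is left to the caller here because it depends on g; for a general g the set of solutions can change with y, which is why the y-axis may need to be split into separate regions.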

References

- M. Comer. ECE 600. Class Lecture. Random Variables and Signals. Faculty of Electrical Engineering, Purdue University. Fall 2013.

