Bayes Error for Minimizing Risk

Contents

Introduction

In class we discussed Bayes rule for minimizing the probability of error. Our goal is to generalize this rule to minimize risk instead of probability of error. For simplicity we deal with the two class case. Then we provide examples for the cases of 1D and 2D features, and we derive Bayes rule for minimizing risk in these cases.

We briefly motivate this topic by considering the following scenario. Suppose that a doctor has to classify a tumor as cancerous or benign. We consider the cost of misclassifying the tumor as benign to be high, since we would like the patient to be treated quickly if cancer is present. Therefore, we would assign a high cost to misclassifying the tumor as benign. We shall show how to incorporate this cost into Bayes rule in this article.

Bayes rule for minimizing risk

Let $ x \in \mathbb R^n $ be a feature vector. Let $ q_i(x) $ be the posterior probability of class $ i $ (denoted as $ \omega_i $) given $ x $, and let $ P_i $ be the prior probability for $ omega_i $. Let $ p_i(x) $ be the class conditional density for class $ i $. Denote $ c_{ij} $ as the cost of deciding $ x \in \omega_i $ with $ \omega_j $ as the true class. The conditional cost of assigning $ x \in \omega_i $ given $ x $ is

$ r_i(x) = c_{i1} q_1(x) + c_{i2} q_2(x) $

where $ i = 1, 2 $.

We assign $ x $ so that cost is minimum:

$ r_1(x) \lessgtr^{\omega_1}_{\omega_2} r_2(x) $

which says to decide $ \omega_1 $ if $ r_1(x) < r_2(x) $ and $ \omega_2 $ otherwise. If we make the decision this way, the total cost becomes

$ \begin{split} E[r(x)] &= \int \text{min}[ r_1(x), r_2(x) ] p(x) dx \\ &= \int \text{min} [c_{11} q_1(x) + c_{12} q_2(x), c_{21} q_1(x) + c_{22} q_2(x)] p(x) dx \\ &= \int \text{min} [c_{11} P_1 p_1(x) + c_{12} P_2 p_2(x), c_{21} P_1 p_1(x) + c_{22} P_2 p_2(x) ] dx \\ &= \int_{R_1} c_{11} P_1 p_1(x) + c_{12} P_2 p_2(x) dx + \int_{R_2} c_{21} P_1 p_1(x) + c_{22} P_2 p_2(x) dx \\ &= (c_{21} P_1 + c_{22} P_2) + \int_{R_1} (c_{11} - c_{21}) P_1 p_1(x) + (c_{12} - c_{22}) P_2 p_2(x) dx \end{split} $

</center>

Example 1: 1D features

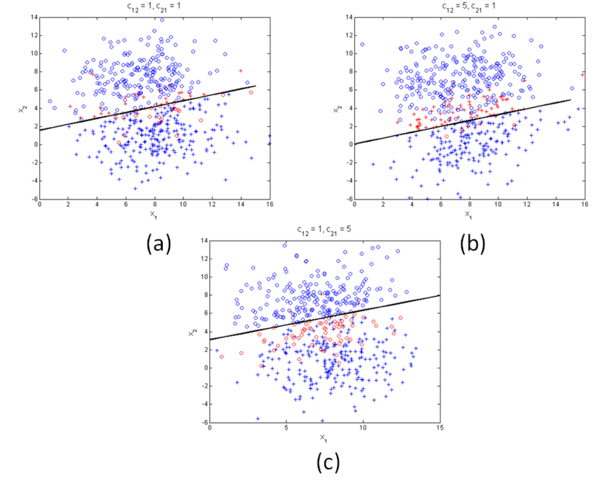

Example 2: 2D features

Summary and Conclusions

In this lecture we have shown that the probability of error ($Prob \left[ Error \right] $) when using Bayes error, is upper bounded by the Chernoff Bound. Therefore,

for $ \beta \in \left[ 0, 1 \right] $.

When $ \beta =\frac{1}{2} $ then $ \varepsilon_{\frac{1}{2}} $ in known as the Bhattacharyya bound.

References

[1]. Duda, Richard O. and Hart, Peter E. and Stork, David G., "Pattern Classication (2nd Edition)," Wiley-Interscience, 2000.

[2]. Mireille Boutin, "ECE662: Statistical Pattern Recognition and Decision Making Processes," Purdue University, Spring 2014.

Questions and comments

If you have any questions, comments, etc. please post them On this page.