| (22 intermediate revisions by 6 users not shown) | |||

| Line 1: | Line 1: | ||

| + | =Lecture 21, [[ECE662]]: Decision Theory= | ||

| + | |||

| + | Lecture notes for [[ECE662:BoutinSpring08_Old_Kiwi|ECE662 Spring 2008]], Prof. [[user:mboutin|Boutin]]. | ||

| + | |||

| + | Other lectures: [[Lecture 1 - Introduction_Old Kiwi|1]], | ||

| + | [[Lecture 2 - Decision Hypersurfaces_Old Kiwi|2]], | ||

| + | [[Lecture 3 - Bayes classification_Old Kiwi|3]], | ||

| + | [[Lecture 4 - Bayes Classification_Old Kiwi|4]], | ||

| + | [[Lecture 5 - Discriminant Functions_Old Kiwi|5]], | ||

| + | [[Lecture 6 - Discriminant Functions_Old Kiwi|6]], | ||

| + | [[Lecture 7 - MLE and BPE_Old Kiwi|7]], | ||

| + | [[Lecture 8 - MLE, BPE and Linear Discriminant Functions_Old Kiwi|8]], | ||

| + | [[Lecture 9 - Linear Discriminant Functions_Old Kiwi|9]], | ||

| + | [[Lecture 10 - Batch Perceptron and Fisher Linear Discriminant_Old Kiwi|10]], | ||

| + | [[Lecture 11 - Fischer's Linear Discriminant again_Old Kiwi|11]], | ||

| + | [[Lecture 12 - Support Vector Machine and Quadratic Optimization Problem_Old Kiwi|12]], | ||

| + | [[Lecture 13 - Kernel function for SVMs and ANNs introduction_Old Kiwi|13]], | ||

| + | [[Lecture 14 - ANNs, Non-parametric Density Estimation (Parzen Window)_Old Kiwi|14]], | ||

| + | [[Lecture 15 - Parzen Window Method_Old Kiwi|15]], | ||

| + | [[Lecture 16 - Parzen Window Method and K-nearest Neighbor Density Estimate_Old Kiwi|16]], | ||

| + | [[Lecture 17 - Nearest Neighbors Clarification Rule and Metrics_Old Kiwi|17]], | ||

| + | [[Lecture 18 - Nearest Neighbors Clarification Rule and Metrics(Continued)_Old Kiwi|18]], | ||

| + | [[Lecture 19 - Nearest Neighbor Error Rates_Old Kiwi|19]], | ||

| + | [[Lecture 20 - Density Estimation using Series Expansion and Decision Trees_Old Kiwi|20]], | ||

| + | [[Lecture 21 - Decision Trees(Continued)_Old Kiwi|21]], | ||

| + | [[Lecture 22 - Decision Trees and Clustering_Old Kiwi|22]], | ||

| + | [[Lecture 23 - Spanning Trees_Old Kiwi|23]], | ||

| + | [[Lecture 24 - Clustering and Hierarchical Clustering_Old Kiwi|24]], | ||

| + | [[Lecture 25 - Clustering Algorithms_Old Kiwi|25]], | ||

| + | [[Lecture 26 - Statistical Clustering Methods_Old Kiwi|26]], | ||

| + | [[Lecture 27 - Clustering by finding valleys of densities_Old Kiwi|27]], | ||

| + | [[Lecture 28 - Final lecture_Old Kiwi|28]], | ||

| + | ---- | ||

| + | ---- | ||

| + | |||

When the number of categories, c is big, decision tress are particularly good. | When the number of categories, c is big, decision tress are particularly good. | ||

| Line 5: | Line 40: | ||

One possible decision tree based on simple queries is the following: | One possible decision tree based on simple queries is the following: | ||

| − | + | [[Image:decision_tree_Old Kiwi.jpg]] | |

==Three crucial questions to answer== | ==Three crucial questions to answer== | ||

| + | |||

| + | How do you grow or construct a decision tree using training data? | ||

| + | |||

| + | CART Methodology - Classification and Regressive Tree | ||

For constructing a decision tree, for a given classification problem, we have to answer these three questions | For constructing a decision tree, for a given classification problem, we have to answer these three questions | ||

| − | 1) Which question | + | 1) Which question should be asked at a given node -"Query Selection" |

2) When should we stop asking questions and declare the node to be a leaf -"When should we stop splitting" | 2) When should we stop asking questions and declare the node to be a leaf -"When should we stop splitting" | ||

| Line 18: | Line 57: | ||

We shall discuss questions 1 and 2 (3 being very trivial) | We shall discuss questions 1 and 2 (3 being very trivial) | ||

| + | |||

| + | Need to define 'impurity' of a dataset such that <math>impurity = 0 </math> when all the training data belongs to one class. | ||

| + | |||

| + | Impurity is large when the training data contain equal percentages of each class | ||

| + | |||

| + | <math>P(\omega _i) = \frac{1}{C}</math>; for all <math>i</math> | ||

| + | |||

| + | Let <math> I </math> denote the impurity. Impurity can be defined in the following ways: | ||

| + | |||

| + | '''Entropy Impurity''': | ||

| + | |||

| + | <math>I = \sum_{j}P(\omega _j)\log_2P(\omega _j)</math>, when priors are known, else approximate <math>P(\omega _j)</math> by | ||

| + | <math>P(\omega _j) = \frac{\#\,of\,training\,patterns\,in\,\omega_j}{Total\,\#\,of\,training\,patterns}</math> | ||

| + | |||

| + | '''Gini Impurity''' | ||

| + | |||

| + | <math>I = \sum_{i\ne j}P(\omega _i)P(\omega _j) = \frac{1}{2}[1- \sum_{j}P^2(\omega _j)</math> | ||

| + | |||

| + | Ex: when C = 2, <math>I = P(\omega _1)P(\omega _2)</math> | ||

| + | |||

| + | '''Misclassification Impurity''' | ||

| + | |||

| + | <math>I = 1-max P(\omega _j)</math> | ||

| + | |||

| + | defined as the "minimum probability that a training pattern is misclassified" | ||

| + | |||

| + | The following figure shows above-mentioned impurity functions for a two-category case, as a function of the probability of one of the categories.(DHS-399p) | ||

| + | |||

| + | [[Image:impurity_Old Kiwi.jpg]] | ||

| + | |||

| + | Now let us look at each of the three questions in detail. | ||

| + | |||

| + | |||

| + | '''Query Selection''' | ||

| + | |||

| + | Heuristically, want impurity to decrease from one node to its children. | ||

| + | |||

| + | [[Image:node_Old Kiwi.jpg]] | ||

| + | |||

| + | We assume that several training patterns are available at node N and they have a good mix of all different classes. | ||

| + | |||

| + | I(N) := impurity at node N. | ||

| + | |||

| + | Define impurity drop at node N as: | ||

| + | <math>\triangle I=I(N)-P_{L}I(N_{L})-(1-P_{L})I(N_{R})</math> | ||

| + | |||

| + | where <math>P_{L}</math> and <math>(1-P_{L})</math> are estimated with training patterns at node N. | ||

| + | |||

| + | A query that miximizes <math>\triangle I</math> is "probably" a good one. But "finding the query that maximizes" is not a well defined question because we are not doing an exhaustive search over all the possible queries. Rather we narrow down to a set of few queries and find among them, which one maximizes <math>\triangle I</math>. | ||

| + | |||

| + | |||

| + | ''Example:'' | ||

| + | |||

| + | 1. look at separation hyperplane that miximizes <math>\triangle I(N)</math> | ||

| + | |||

| + | 2. look for a single feature threshold (colour or shape or taste) which would maximize <math>\triangle I(N)</math> | ||

| + | |||

| + | Query selection => numerical optimization problem. | ||

| + | |||

| + | |||

| + | '''When to stop splitting ?''' | ||

| + | |||

| + | Key: look for balance. | ||

| + | |||

| + | [[Image:Balance_Old Kiwi.jpg]] | ||

| + | |||

| + | Need to construct a "balanced tree". Many ways to do this. | ||

| + | |||

| + | |||

| + | ''Example:'' | ||

| + | |||

| + | 1. Validation - train with 80% of training data, validate on 20%. Continue splitting until validation error is minimized. | ||

| + | |||

| + | 2. Thresholding - stop splitting when threshold <math>\beta</math> is small (but not too small) | ||

| + | |||

| + | <math>\beta=0.03</math> | ||

| + | |||

| + | |||

| + | '''Warning: "Horizon Effect"''' | ||

| + | |||

| + | Lack of looking ahead may cause us to stop splitting prematurely. | ||

| + | |||

| + | You should keep splitting for a bit, after you meet stopping criteria. | ||

| + | |||

| + | |||

| + | '''How to correct oversplitting?''' | ||

| + | |||

| + | Use "pruning" (or inverse splitting) which implies that take 2 leaves that have a common parent and merge them if "merging helps" i.e. | ||

| + | |||

| + | - if I either doesn't change or only increases a little bit. | ||

| + | |||

| + | - if validation error either stays the same or decreases. | ||

| + | |||

| + | Declare parent to be a leaf. | ||

| + | |||

| + | |||

| + | '''Pruning increases generalization.''' | ||

| + | |||

| + | |||

| + | '''Idea''': Look further than horizon but step back if it is not worth it. | ||

| + | |||

| + | [[Image:ECE662_lect21_stopping.jpg _Old Kiwi]] | ||

| + | |||

| + | Here is an example where increasing the number of nodes typically lowers the impurity. If the stopping condition looks at at a short horizon, then the number of nodes may stop at the first stopping point. If the horizon continues, then the number of nodes can stop at the second stopping point, which appears to be the best location for this example. | ||

| + | |||

| + | [[Category:Lecture Notes]] | ||

Latest revision as of 08:42, 17 January 2013

Lecture 21, ECE662: Decision Theory

Lecture notes for ECE662 Spring 2008, Prof. Boutin.

Other lectures: 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28,

When the number of categories, c is big, decision tress are particularly good.

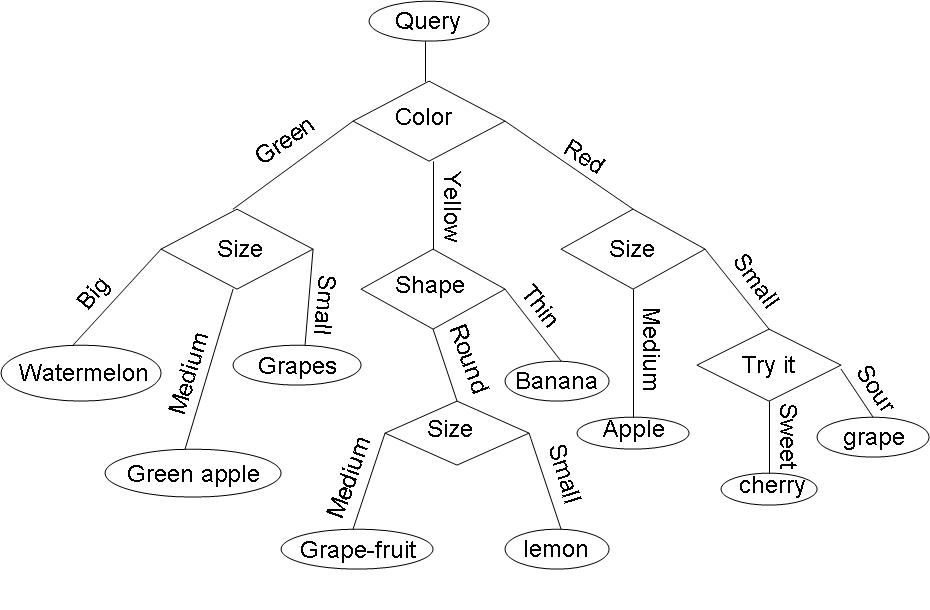

Example: Consider the query "Identify the fruit" from a set of c=7 categories {watermelon, apple, grape, lemon, grapefruit, banana, cherry} .

One possible decision tree based on simple queries is the following:

Three crucial questions to answer

How do you grow or construct a decision tree using training data?

CART Methodology - Classification and Regressive Tree

For constructing a decision tree, for a given classification problem, we have to answer these three questions

1) Which question should be asked at a given node -"Query Selection"

2) When should we stop asking questions and declare the node to be a leaf -"When should we stop splitting"

3) Once a node is decided to be a leaf, what category should be assigned to this leaf -"Leaf classification"

We shall discuss questions 1 and 2 (3 being very trivial)

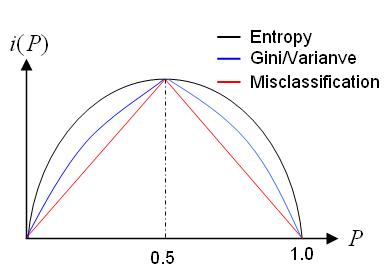

Need to define 'impurity' of a dataset such that $ impurity = 0 $ when all the training data belongs to one class.

Impurity is large when the training data contain equal percentages of each class

$ P(\omega _i) = \frac{1}{C} $; for all $ i $

Let $ I $ denote the impurity. Impurity can be defined in the following ways:

Entropy Impurity:

$ I = \sum_{j}P(\omega _j)\log_2P(\omega _j) $, when priors are known, else approximate $ P(\omega _j) $ by $ P(\omega _j) = \frac{\#\,of\,training\,patterns\,in\,\omega_j}{Total\,\#\,of\,training\,patterns} $

Gini Impurity

$ I = \sum_{i\ne j}P(\omega _i)P(\omega _j) = \frac{1}{2}[1- \sum_{j}P^2(\omega _j) $

Ex: when C = 2, $ I = P(\omega _1)P(\omega _2) $

Misclassification Impurity

$ I = 1-max P(\omega _j) $

defined as the "minimum probability that a training pattern is misclassified"

The following figure shows above-mentioned impurity functions for a two-category case, as a function of the probability of one of the categories.(DHS-399p)

Now let us look at each of the three questions in detail.

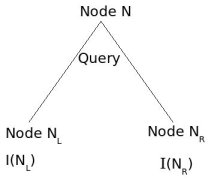

Query Selection

Heuristically, want impurity to decrease from one node to its children.

We assume that several training patterns are available at node N and they have a good mix of all different classes.

I(N) := impurity at node N.

Define impurity drop at node N as: $ \triangle I=I(N)-P_{L}I(N_{L})-(1-P_{L})I(N_{R}) $

where $ P_{L} $ and $ (1-P_{L}) $ are estimated with training patterns at node N.

A query that miximizes $ \triangle I $ is "probably" a good one. But "finding the query that maximizes" is not a well defined question because we are not doing an exhaustive search over all the possible queries. Rather we narrow down to a set of few queries and find among them, which one maximizes $ \triangle I $.

Example:

1. look at separation hyperplane that miximizes $ \triangle I(N) $

2. look for a single feature threshold (colour or shape or taste) which would maximize $ \triangle I(N) $

Query selection => numerical optimization problem.

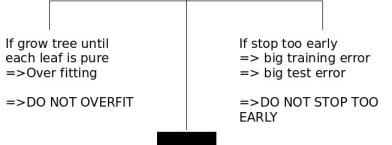

When to stop splitting ?

Key: look for balance.

Need to construct a "balanced tree". Many ways to do this.

Example:

1. Validation - train with 80% of training data, validate on 20%. Continue splitting until validation error is minimized.

2. Thresholding - stop splitting when threshold $ \beta $ is small (but not too small)

$ \beta=0.03 $

Warning: "Horizon Effect"

Lack of looking ahead may cause us to stop splitting prematurely.

You should keep splitting for a bit, after you meet stopping criteria.

How to correct oversplitting?

Use "pruning" (or inverse splitting) which implies that take 2 leaves that have a common parent and merge them if "merging helps" i.e.

- if I either doesn't change or only increases a little bit.

- if validation error either stays the same or decreases.

Declare parent to be a leaf.

Pruning increases generalization.

Idea: Look further than horizon but step back if it is not worth it.

File:ECE662 lect21 stopping.jpg Old Kiwi

Here is an example where increasing the number of nodes typically lowers the impurity. If the stopping condition looks at at a short horizon, then the number of nodes may stop at the first stopping point. If the horizon continues, then the number of nodes can stop at the second stopping point, which appears to be the best location for this example.