Parzen Window Density Estimation

A slecture by ECE student Ben Foster

Partly based on the ECE662 Spring 2014 lecture material of Prof. Mireille Boutin.

Contents

[hide]Coverage

- Brief introduction to non-parametric density estimation, specifically Parzen windowing

- Brief introduction to the theory that Parzen windowing is based on

- Visualizations of Parzen windows and a discussion of the strengths and weaknesses of the Parzen window method as a density estimation technique

Introduction/Motivation

So far in our study of pattern recognition and classification we have primarily focused on the use of discriminant functions as a means of classifying data. That is, for a set of classes $ \omega_c $, we choose to classify a sample $ \vec{X}_i $ to a class c if that class is most probable given what we know about the sample.

The phrase ``most probable" implies that we are dealing with probability distributions defined in the normal way, which is correct. As one might guess, the probability distributions that are used to map samples to classes are not always of immediately obvious character and/or easy to obtain. Maximum likelihood estimation (MLE) and Bayesian parameter estimation are fairly broad categories of methodologies that attempt to estimate the parameters of the underlying distributions of data, and the expectation-maximization (EM) algorithm is an oft-used particular method of estimating these parameters.

However, not all density-estimation methods are dependent on having prior knowledge of the distributions of the data to be classified. ``Non-parametric" methods eschew assumptions about the distribution of data to varying degrees, thus circumventing some of the issues associated with more Bayesian methods. Though there are a number of non-parametric density-estimation methods that are widely employed, this lecture will focus on one of the most popular: Parzen window estimation. The following survey of the method will hopefully shed some light on the pros and cons of the Parzen window method individually. Additionally, a direct application of Parzen window estimation to a classification problem will be briefly discussed.

Parzen Windows

- Convergence to $ p(\vec{X}) $ - Are we actually constructing a p.d.f.?

The motivation for choosing a non-parametric method for estimating a density function is largely derived from a lack of prior information about the density function that corresponds to an experimenter's data. Without a substantial amount of information about the distribution of data (and conditional distributions of data belonging to each class) it is near impossible to do parametric density estimation, namely maximum likelihood estimation (MLE) and Bayesian parameter estimation (BPE). Recall that for MLE, the estimated parameter vector $ \hat{\theta} $ corresponds to the value of $ \vec{\theta} $ that maximizes the likelihood function, i.e.:

Also recall that BPE involves using Bayes' rule to obtain the conditional distribution of the parameter vector $ \theta $ given the data $ \hat{X} $:

Clearly, both of these methods depend strongly on knowledge of the conditional distributions of the data $ \vec{X} $ which, again, is not always readily available. Additionally it is not necessarily true that the true distribution of a given dataset is described well by one of the commonly-used p.d.f.'s.

In both cases, it seems that one could be well-served to try to construct a p.d.f. based on the data at hand to circumvent these issues. If we do not already have an idea of the p.d.f. that describes the data, why can't do things backwards and use the data to construct a p.d.f.? However it is not immediately obvious that such a procedure gives a robust result (or is even possible).

It turns out that if we are careful in our definition, we can in fact construct such a p.d.f.. Chapter 4, Section 2 of Duda, Hart, and Stork's Pattern Classification [1] provides such a definition which is the basis for the following analysis. We start by observing very generally that the probability P that a vector $ \vec{X} $ will lie in a region $ \mathcal{R} $ is given by

If we now draw n samples X$ _1 $,...,X$ _n $, we can express the probability that k of these n fall in $ \mathcal{R} $ with the binomial law:

We also know that for the binomial law the expected value for k is equal to the number of samples drawn multiplied by the probability of success, i.e.:

which is easily rearranged into

We assume that the above is a good estimate for P since the binomial distribution is know to peak strongly near its mean. Now comes the key assumption for our derivation. Let us say that the region $ \mathcal{R} $ is very small, so small that the function p does not vary within it in a significant way. Now we can define the voxel V as the volume enclosed by $ \mathcal{R} $ which, along with our assumption about $ \mathcal{R} $'s size, allows us to write

Now that we have the above expression in hand and know that P is equal to k/n, we can write our desired result:

It is important to note that the above equation is an approximation. It is very difficult to establish true equality in the limit as V goes to zero. Since we would like to think of this density estimation practically, imagine that we have a finite number of samples n and take V to zero. Then there is an incredibly small chance that one of the samples actually falls within V, meaning that our estimate of $ p(\vec{X}) $ goes to zero. Even if a sample does lie within V, it is easy to see that in such a case $ p(\vec{X}) $ goes to infinity as V goes to zero. Neither of these cases is helpful in our search for the true $ p(\vec{X}) $.

If we allow the number of samples n to go to infinity, however, we are able to establish convergence of the previous equation to equality. Let us rewrite the previous equation (with equality instead of approximation) as a sequence that changes with n:

This sequence only converges to $ p(\vec{X}) $ if three conditions are met:

- $ \underset{n\to\infty}{\lim}V_n = 0 $

- $ \underset{n\to\infty}{\lim} k_n = \infty $

- $ \underset{n\to\infty}{\lim} k_n/n = 0 $

We have already established that we need condition 1 to be true if we would like to establish true equality in our most recent equation. This condition is necessary so that we are in fact finding the exact value of $ p(\vec{X}) $ (not the average value over some space with finite volume). The second condition is needed so that $ \frac{k_n}{n} $ converges to P, and the third condition ensures that the total number of samples k that lie in $ \mathcal{R} $ are an infinitesimal fraction of the total number of samples n. One way of making sure that these three conditions are satisfied is by defining V in terms of n in such a way that V$ _n $ shrinks as n grows. One popular function that satisfies this is $ V_n = \frac{1}{\sqrt{n}} $; this function helps to form the foundation of the Parzen window method that is popular for non-parametric density estimation.

- Density Estimation - What does our window function look like? What does our p.d.f. look like?

Now that we have established that we can design a function that converges to the true p.d.f. of our data given a high enough number of samples (n), we would like to flesh out the bare-bones results of our analysis into a more practical form. As per the treatment in [1], let us claim that V$ _n $ is the volume corresponding to a hypercube with edge length h$ _n $. Now we can define a window function $ \varphi(\vec{X}) $ such that

and

It should be clear that $ \varphi(\vec{X}) $ is a density function that has the same dimensionality as $ \vec{X} $.

It is also helpful if we define our volume V$ _n $ in terms of the number of dimensions d that our density function $ \varphi(\vec{X}) $ has. This parameterization has the form

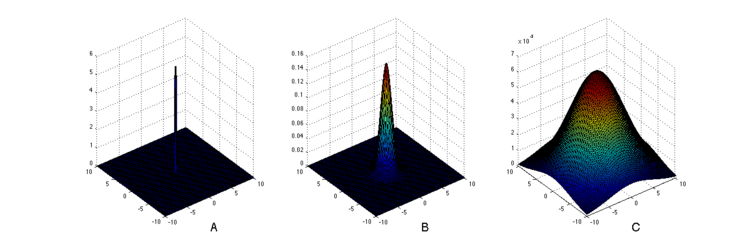

where h$ _n $ is the length of a side of the hypercube that has volume V$ _n $, and d is the number of dimensions of our density function $ \varphi(\vec{X}) $. h$ _n $ is often referred to as the window width; this intuitive meaning is illustrated in Figure [1]. Note that V$ _n $ does not necessarily need to be enclosed by a hypercube, but can instead be enclosed by a hypervolume that does not have equally sized sides. However, $ \varphi(\vec{X}) $ is usually chosen to be equally scaled in all dimensions for the sake of simplicity.

Now comes the distinctive feature of the Parzen window technique. Let us say that, out of our n samples, k of the samples fall within our volume V$ _n $ centered at $ \vec{X} $. This number k$ _n $ can be expressed as

where $ \vec{X}_i $ describes the coordinates of the ith sample. We can then make the simple substitution of the above equation into the equation describing p$ _n $:

The above formula is the one most commonly associated with the Parzen windowing method. It essentially says that the estimated p.d.f. is made up of a sum of window functions, and is an actual density itself provided that $ \varphi(\vec{X}) $ is a density function and the values of the mean and variance of the estimated p.d.f. converge. That is, $ p_n(\vec{X})\xrightarrow{n\rightarrow\infty}p(\vec{X}) $. Please refer to Duda, Hart, and Stork, Chapter 4, p. 8-10 [1] for an involved but excellent derivation of these convergence properties.

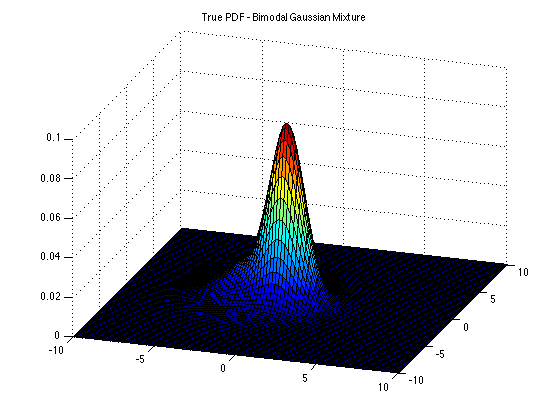

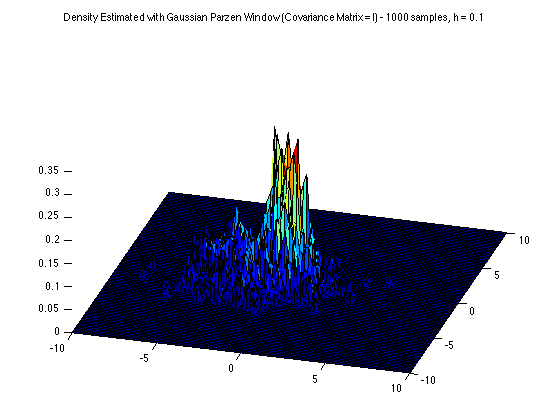

As mentioned above, the window function $ \varphi(\vec{X}) $ can be chosen to be anything so long as it is a true density function. Some popular choices are a uniform p.d.f. on the interval equal to the length of h and the Gaussian p.d.f. with $ \mu = \vec{X}_i $ and $ \Sigma = \mathcal{I} $. Below are a few figures that illustrate a) the nature of the window function $ \varphi(\vec{X}) $ and how it changes as h changes and b) the nature of a Parzen window-estimated density function and how it differs from the true density function from which data is drawn.

Discussion/Conclusion

Note in the figures above how much influence the h parameter has on the fidelity of the estimated p.d.f. to the true p.d.f. from which the samples used to estimate the p.d.f. are drawn. In practice, this is a major drawback of the Parzen windowing method, as there are not truly robust ways to determine the h parameter if one does not have some prior information about what the underlying p.d.f. to be estimated looks like. Note the similarities between Figures [3] and [4]. How is one supposed to determine which of these two is more likely to represent the underlying p.d.f.? No looking at Figure [2] - that's cheating! Clearly Figure [5] uses an h parameter that is too sensitive to the data, and looks very noisy as a result. However, the phrase "too noisy" is in relation to the information that we already know about the underlying p.d.f. (Figure 2). It is easy to imaging that it would be very difficult to classify a Parzen window-estimated distribution as "too noisy" if the number of dimensions of the distribution was fairly large or at least large enough to keep us from easily visualizing the estimated p.d.f.. In summary, the problem of choosing the h parameter is not a trivial one.

Another disadvantage of the Parzen window method is its fairly prohibitive computational load. For example, if the window function $ \varphi(\vec{X}) $ is defined to be a d-dimensional Gaussian, it is technically defined on all d-space. In practice it is not necessary (or possible) to define a function on all d-space, but in order to be a reasonable approximation to $ \varphi(\vec{X}) $ the function that we define for our computations should be defined on a reasonable subset of the space. The time to define $ \varphi(\vec{X}) $ grows exponentially with d, which is obviously a pretty substantial computational load. Factor in the number of data points that one uses to build the estimated p.d.f. with, and the computation time for the Parzen window method can quickly become untenable.

In short, though the ability to effectively cast aside the issue of parameter estimation can be a compelling reason to employ the Parzen window method for density estimation, it is difficult to tout the method too highly in light of its heavy computation time and the difficulty associated with choosing an appropriate h-parameter.

References

[1] R.O. Duda, P.E. Hart, and D.G. Stork: Pattern Classification, Wiley, New York, NY, 2001, Chapter 4, p. 2-18

[2] K. Fukunaga, Introduction to Statistical Pattern Recognition, Academic Press, Boston, MA, 1990, p. 254-268

[3] Mireille Boutin, ECE662: Statistical Pattern Recognition and Decision Making Processes, Purdue University, Spring 2014

Questions and comments

If you have any questions, comments, etc. please post them on this page.