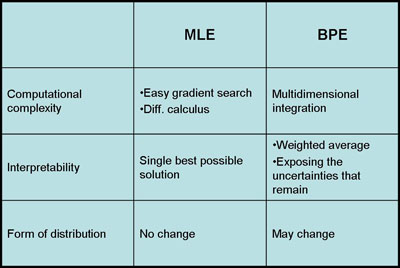

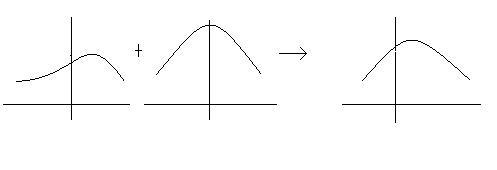

| + | Figure for BPE processes: | ||

| + | [[Image:BPEprocess_OldKiwi.jpg]] | ||

Revision as of 12:45, 17 March 2008

Lecture Objective

- Maximum Likelihood Estimation and Bayesian Parameter Estimation

- Linear Discriminant Functions

Maximum Likelihood Estimation and Bayesian Parameter Estimation

If the prior distribution satisfies certain conditions (for example, it is nonzero and reasonably smooth around the true parameter value), then Bayesian Parameter Estimation (BPE) and Maximum Likelihood Estimation (MLE) are asymptotically equivalent: as the amount of training data grows without bound, the posterior concentrates around the ML estimate. However, large training datasets are typically unavailable in pattern recognition problems. Furthermore, as the dimension of the data increases, the complexity of estimating the parameters reliably can grow exponentially. In this finite-data setting, each method has advantages over the other:
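The asymptotic equivalence can be illustrated with a minimal sketch, assuming a univariate Gaussian with known variance and a Gaussian prior on the mean (a standard conjugate case chosen for illustration, not specified in the lecture). Here the Bayesian posterior mean is a weighted average of the sample mean (the ML estimate) and the prior mean, and the weight on the prior shrinks roughly like 1/n. The true mean and the prior parameters below are hypothetical values; the prior is deliberately centred away from the truth.

```python
import random

def mle_mean(samples):
    """ML estimate of a Gaussian mean: the sample average."""
    return sum(samples) / len(samples)

def bayes_mean(samples, sigma2, mu0, sigma0_2):
    """Posterior mean of a Gaussian mean under a N(mu0, sigma0_2) prior,
    assuming the data variance sigma2 is known."""
    n = len(samples)
    xbar = mle_mean(samples)
    w = n * sigma0_2 / (n * sigma0_2 + sigma2)  # weight given to the data
    return w * xbar + (1 - w) * mu0             # remaining weight goes to the prior

random.seed(0)
true_mu, sigma2 = 2.0, 1.0      # hypothetical data-generating values
mu0, sigma0_2 = -1.0, 0.5       # hypothetical, deliberately mis-centred prior
data = [random.gauss(true_mu, sigma2 ** 0.5) for _ in range(10000)]

for n in (5, 50, 500, 5000):
    ml = mle_mean(data[:n])
    bp = bayes_mean(data[:n], sigma2, mu0, sigma0_2)
    print(f"n={n:5d}  MLE={ml:.4f}  Bayes={bp:.4f}  |diff|={abs(ml - bp):.4f}")
```

With few samples the prior pulls the Bayesian estimate noticeably away from the sample mean; as n grows the two estimates become practically indistinguishable.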

- Computational Complexity:

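MLE is computationally cheaper than BPE, particularly for large datasets, because it only requires maximizing the likelihood, either analytically with differential calculus (setting the gradient to zero) or with a gradient-based search. BPE, on the other hand, requires integration over the (possibly high-dimensional) parameter space, which is computationally expensive.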
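The cost difference can be made concrete with a minimal sketch, assuming a Gaussian likelihood with known variance and a uniform prior on a bounded interval (both assumptions for illustration). The ML estimate has a closed form, while the Bayesian posterior mean is computed here by brute-force numerical integration on a grid. Practical BPE usually relies on conjugate priors or Monte Carlo methods instead; the grid is used only because it makes the exponential growth with parameter dimension easy to see. The helper names and grid settings are hypothetical.

```python
import math

def log_likelihood(theta, samples, var=1.0):
    """Gaussian log-likelihood of the mean theta, up to an additive constant
    (the variance var is assumed known)."""
    return sum(-(x - theta) ** 2 / (2 * var) for x in samples)

def mle(samples):
    # Setting the derivative of the log-likelihood to zero gives a closed form:
    # the sample mean. No search or integration is needed.
    return sum(samples) / len(samples)

def bayes_posterior_mean(samples, lo=-10.0, hi=10.0, m=2001):
    """Posterior mean of theta under a uniform prior on [lo, hi], computed by
    brute-force numerical integration over an m-point grid."""
    step = (hi - lo) / (m - 1)
    grid = [lo + i * step for i in range(m)]
    logs = [log_likelihood(t, samples) for t in grid]
    top = max(logs)                          # shift to avoid exp() underflow
    weights = [math.exp(v - top) for v in logs]
    # The grid spacing and the shift cancel between numerator and denominator.
    return sum(t * w for t, w in zip(grid, weights)) / sum(weights)

def grid_points(m, d):
    # A grid with m points per axis over a d-dimensional parameter space.
    return m ** d

data = [1.0, 2.0, 3.0]
print(mle(data), bayes_posterior_mean(data))
for d in (1, 2, 3, 6):
    print(f"d={d}: {grid_points(101, d):,} likelihood evaluations")
```

Both estimates agree here (about 2.0), but the integral needs one likelihood evaluation per grid point, and the number of grid points grows as m to the power d.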

- Interpretability:

The results of MLE are easier to interpret because MLE produces a single model: the one whose parameters maximize the likelihood of the training data. BPE, in contrast, produces a weighted average of many models, each weighted by its posterior probability, which is harder to understand.
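The "weighted sum of models" is the Bayesian predictive density p(x | D) = ∫ p(x | θ) p(θ | D) dθ. The sketch below approximates that integral by a finite weighted sum over a grid of candidate means and contrasts it with the single plug-in density p(x | θ̂) used by MLE. It assumes a Gaussian likelihood with known unit variance and a flat prior over the grid (assumptions for illustration); under these assumptions the exact predictive density is Gaussian with variance 1 + 1/n, slightly wider than the ML density because it accounts for uncertainty in θ. Function names are hypothetical.

```python
import math

def gauss_pdf(x, mu, var):
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def posterior_weights(samples, grid, var=1.0):
    """Normalised posterior weights p(theta_k | D) on a grid, assuming a flat
    prior over the grid and a Gaussian likelihood with known variance."""
    logs = [sum(-(x - t) ** 2 / (2 * var) for x in samples) for t in grid]
    top = max(logs)
    w = [math.exp(v - top) for v in logs]
    z = sum(w)
    return [wi / z for wi in w]

def ml_density(x, samples, var=1.0):
    # MLE: plug a single estimate theta_hat into p(x | theta).
    theta_hat = sum(samples) / len(samples)
    return gauss_pdf(x, theta_hat, var)

def bayes_predictive(x, samples, var=1.0, lo=-10.0, hi=10.0, m=2001):
    # BPE: average p(x | theta_k) over many models, weighted by p(theta_k | D).
    step = (hi - lo) / (m - 1)
    grid = [lo + i * step for i in range(m)]
    weights = posterior_weights(samples, grid, var)
    return sum(w * gauss_pdf(x, t, var) for t, w in zip(grid, weights))

data = [1.0, 2.0, 3.0]
print(ml_density(2.0, data), bayes_predictive(2.0, data))
```

At x = 2 the single ML model gives a density of roughly 0.40, while the Bayesian mixture gives roughly 0.35, since its probability mass is spread over a wider range.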

- Form of Distribution:

The advantage of BPE is that it uses the prior information we have about the model, and it allows the model to be updated sequentially as new samples arrive (the posterior after one sample serves as the prior for the next). If that prior information is reliable, BPE is generally better than MLE. On the other hand, if the prior is uniform, BPE is not very different from MLE. Indeed, MLE can be viewed as a special, simplified case of BPE: with a uniform prior, the posterior is proportional to the likelihood, so its peak is exactly the ML estimate.
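Both the sequential update and the uniform-prior special case show up in a minimal sketch, assuming Bernoulli data with a Beta prior on the success probability (a conjugate pair chosen for illustration; the lecture does not name a model). Each observation turns the current posterior into the prior for the next one. With the uniform Beta(1, 1) prior, the peak of the posterior (the MAP estimate) coincides exactly with the ML estimate k/n, while an informative prior, here a hypothetical Beta(10, 10), pulls the estimate toward its centre of 0.5. Full BPE keeps the whole posterior; the MAP point is computed only to compare with MLE. The outcome sequence and helper names are hypothetical.

```python
from fractions import Fraction

def update(alpha, beta, x):
    """One sequential Bayesian update of a Beta(alpha, beta) prior after
    observing a single Bernoulli outcome x (0 or 1)."""
    return alpha + x, beta + (1 - x)

def map_estimate(alpha, beta):
    # Mode of Beta(alpha, beta), valid here since alpha, beta >= 1 and alpha + beta > 2.
    return Fraction(alpha - 1, alpha + beta - 2)

def mle(outcomes):
    # ML estimate of the success probability: k successes out of n trials.
    return Fraction(sum(outcomes), len(outcomes))

outcomes = [1, 0, 1, 1, 0, 1, 1, 1]   # hypothetical observations

alpha, beta = 1, 1                    # Beta(1, 1) is the uniform prior on [0, 1]
for x in outcomes:                    # each posterior becomes the next prior
    alpha, beta = update(alpha, beta, x)
print(map_estimate(alpha, beta), mle(outcomes))   # identical under the uniform prior

a, b = 10, 10                         # hypothetical informative prior centred on 0.5
for x in outcomes:
    a, b = update(a, b, x)
print(map_estimate(a, b))             # pulled from the ML value toward 0.5
```

Using exact fractions makes the equality of the uniform-prior MAP estimate and the ML estimate exact rather than approximate.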

See also: Comparison of MLE and Bayesian Parameter Estimation_OldKiwi