| Line 3: | Line 3: | ||

Ex. | Ex. | ||

| − | [[Image:Lecture22_DecisionTree_OldKiwi.JPG]] | + | [[Image:Lecture22_DecisionTree_OldKiwi.JPG]] Figure 1 |

To assign category to a leaf node. | To assign category to a leaf node. | ||

| Line 42: | Line 42: | ||

Synonymons="unsupervised learning" | Synonymons="unsupervised learning" | ||

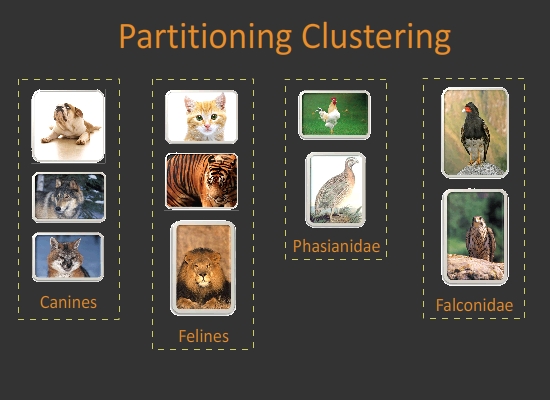

| − | [[Image:PartitionCluster_OldKiwi.jpg]] | + | [[Image:PartitionCluster_OldKiwi.jpg]] Figure 2 |

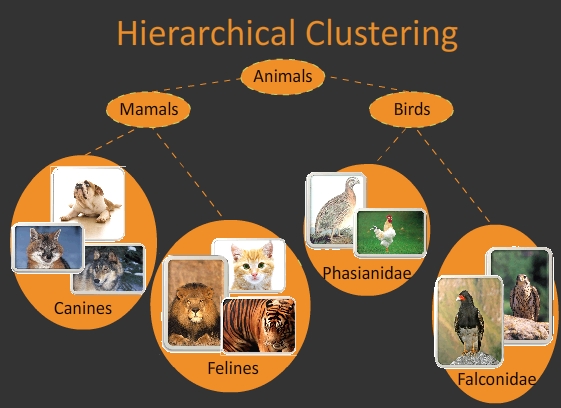

| − | [[Image:HierachichalCluster_OldKiwi.jpg]] | + | [[Image:HierachichalCluster_OldKiwi.jpg]] Figure 3 |

==Clustering as a useful technique for searching in databases== | ==Clustering as a useful technique for searching in databases== | ||

| Line 54: | Line 54: | ||

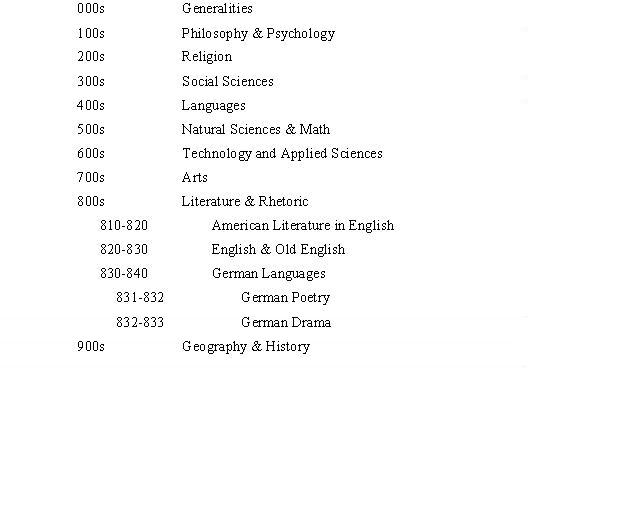

* Example: Dewey system to index books in a library | * Example: Dewey system to index books in a library | ||

| − | [[Image:Dewey_OldKiwi.jpg]] | + | [[Image:Dewey_OldKiwi.jpg]] Figure 4 |

* Example of Index: Face Recognition | * Example of Index: Face Recognition | ||

| Line 64: | Line 64: | ||

- Search will be faster because of <math>\bigtriangleup</math> inequality. | - Search will be faster because of <math>\bigtriangleup</math> inequality. | ||

| − | [[Image:Lec22_hiercluster_OldKiwi.PNG]] | + | [[Image:Lec22_hiercluster_OldKiwi.PNG]] Figure 5 |

* Example: Image segmentation is a clustering problem | * Example: Image segmentation is a clustering problem | ||

- dataset = pixels in image | - dataset = pixels in image | ||

| + | |||

- each cluster is an object in image | - each cluster is an object in image | ||

| − | [[Image:Lec22_housecluster_OldKiwi.PNG]] | + | [[Image:Lec22_housecluster_OldKiwi.PNG]] Figure 6 |

Input to a clustering algorithm is either | Input to a clustering algorithm is either | ||

| + | |||

- distances between each pairs of objects in dataset | - distances between each pairs of objects in dataset | ||

| + | |||

- feature vectors for each object in dataset | - feature vectors for each object in dataset | ||

Revision as of 17:21, 6 April 2008

Note: Most tree-growing methods favor the greatest impurity reduction near the root node.

Ex.

[[Image:Lecture22_DecisionTree_OldKiwi.JPG]] Figure 1
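The impurity reduction mentioned in the note can be computed directly. The sketch below is a minimal illustration, assuming entropy impurity $i(N) = -\sum_j P(\omega_j)\log_2 P(\omega_j)$ and the drop $\Delta i(N) = i(N) - P_L\, i(N_L) - (1-P_L)\, i(N_R)$ for a binary split. The function names and the toy labels are hypothetical. A greedy tree grower would evaluate this for every candidate split at a node and keep the split with the largest drop.

```python
from collections import Counter
from math import log2

def entropy_impurity(labels):
    """Entropy impurity i(N) = -sum_j P(w_j) log2 P(w_j) of the samples at a node."""
    n = len(labels)
    if n == 0:
        return 0.0
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())

def impurity_drop(parent, left, right):
    """Delta i(N) = i(N) - P_L i(N_L) - (1 - P_L) i(N_R) for splitting parent into left/right."""
    p_left = len(left) / len(parent)
    return (entropy_impurity(parent)
            - p_left * entropy_impurity(left)
            - (1 - p_left) * entropy_impurity(right))

# Hypothetical node holding 4 samples of class "a" and 4 of class "b".
parent = ["a"] * 4 + ["b"] * 4
perfect = impurity_drop(parent, ["a"] * 4, ["b"] * 4)                  # pure children
useless = impurity_drop(parent, ["a", "a", "b", "b"], ["a", "a", "b", "b"])  # same mix as parent
print(perfect, useless)  # 1.0 0.0
```

A split that separates the classes perfectly removes the full 1 bit of impurity at this node, while a split whose children keep the parent's class mix removes nothing.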

To assign a category to a leaf node:

Easy!

If all samples at the leaf belong to one class (the leaf is pure)

-> assign this class to the leaf.

else

-> assign the most frequent class among the leaf's samples.
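The leaf-labeling rule above amounts to a majority vote. A minimal sketch, assuming the leaf's training labels are available as a non-empty list (the function name `leaf_label` is hypothetical):

```python
from collections import Counter

def leaf_label(labels):
    """Return the category for a leaf, given the class labels of its training samples."""
    counts = Counter(labels)
    if len(counts) == 1:
        # Pure leaf: every sample has the same class, so use that class.
        return next(iter(counts))
    # Impure leaf: use the most frequent class (ties go to the class seen first).
    return counts.most_common(1)[0][0]

print(leaf_label(["cat", "cat", "cat"]))                # cat
print(leaf_label(["cat", "dog", "dog", "cat", "dog"]))  # dog
```

The pure case is already covered by the majority vote; it is kept as a separate branch only to mirror the two cases in the notes.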

Note: The problem of building a decision tree is "ill-conditioned,"

i.e., small variations in the training data can yield large variations in the decision rules obtained.

Ex. p. 405 (D&H)

Moving a single training sample slightly can change the decision rules substantially.
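The sensitivity can be reproduced on a toy problem. The sketch below is not the example from D&H p. 405; it assumes a depth-one tree (a single split $x_f < t$) chosen by minimum weighted Gini impurity, on hypothetical 2-D data. Nudging one class-a sample from (49, 51) to (51, 49) switches the chosen rule from a split on $x_1$ to a split on $x_2$.

```python
def gini(labels):
    """Gini impurity 1 - sum_j P(w_j)^2 of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_stump(points, labels):
    """Return (feature index, threshold) of the split x[feature] < threshold
    with the lowest weighted Gini impurity (the first one found wins ties)."""
    n = len(points)
    best = None
    for f in range(len(points[0])):
        values = sorted(set(p[f] for p in points))
        # Candidate thresholds: midpoints between consecutive distinct values.
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [y for p, y in zip(points, labels) if p[f] < t]
            right = [y for p, y in zip(points, labels) if p[f] >= t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or score < best[0]:
                best = (score, f, t)
    return best[1], best[2]

labels = ["a", "a", "a", "a", "b", "b", "b", "b"]
before = [(10, 10), (20, 20), (30, 30), (49, 51),
          (50, 50), (60, 60), (70, 70), (80, 80)]
# Same data, but the fourth "a" sample is nudged from (49, 51) to (51, 49).
after = before[:3] + [(51, 49)] + before[4:]

# Feature index 0 is x1, index 1 is x2.
print(best_stump(before, labels))  # (0, 49.5): rule "x1 < 49.5"
print(best_stump(after, labels))   # (1, 49.5): rule "x2 < 49.5"
```

With the first rule, a query at (20, 70) falls on the class-a side; with the second it falls on the class-b side, even though only one training sample moved slightly.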

References on clustering

"Data clustering: a review," A.K. Jain, M.N. Murty, P.J. Flynn [1]

"Algorithms for clustering data," A.K. Jain, R.C. Dubes [2]

"Support vector clustering," Ben-Hur, Horn, Siegelmann, Vapnik [3]

"Dynamic cluster formation using level set methods," Yip, Ding, Chan [4]

What is clustering?

The task of finding "natural" groupings in a data set.

Synonym: "unsupervised learning"

[[Image:PartitionCluster_OldKiwi.jpg]] Figure 2

[[Image:HierachichalCluster_OldKiwi.jpg]] Figure 3

Clustering as a useful technique for searching in databases

Clustering can be used to construct an index for a large dataset so that it can be searched quickly.

- Definition: An index is a data structure that enables sub-linear time lookup.

- Example: the Dewey Decimal system for indexing books in a library

[[Image:Dewey_OldKiwi.jpg]] Figure 4

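- Example of an index: face recognition

- requires labeled face images

- the images must be clustered to obtain sub-linear search time

- search is faster because of the triangle inequality: for a query $q$, a cluster center $c$, and a member $x$, $d(q,x) \ge d(q,c) - d(c,x)$, so if $d(q,c)$ minus the cluster's radius already exceeds the best distance found so far, the whole cluster can be skipped without comparing $q$ to its images

[[Image:Lec22_hiercluster_OldKiwi.PNG]] Figure 5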

- Example: image segmentation is a clustering problem

- dataset = pixels in the image

- each cluster is an object in the image

[[Image:Lec22_housecluster_OldKiwi.PNG]] Figure 6
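The triangle-inequality argument in the face-recognition example can be sketched as an exact nearest-neighbor search over precomputed clusters. This is a minimal sketch, assuming Euclidean distance, clusters that have already been formed, a single level of clusters, and hypothetical 2-D points standing in for face feature vectors; the names `build_index` and `nearest` are illustrative. Each cluster stores its radius $r$ (the largest center-to-member distance), and since $d(q,x) \ge d(q,c) - r$ for every member $x$, a cluster is skipped when that lower bound is no smaller than the best distance found so far.

```python
import math

def build_index(clusters):
    """Attach a radius (largest center-to-member distance) to each (center, members) pair."""
    return [(c, members, max(math.dist(c, x) for x in members))
            for c, members in clusters]

def nearest(index, q):
    """Exact nearest neighbor of q, skipping clusters via the triangle inequality.

    For a member x of a cluster with center c and radius r:
        d(q, x) >= d(q, c) - d(c, x) >= d(q, c) - r
    so when d(q, c) - r >= best distance so far, no member can be closer.
    Returns (nearest point, its distance, number of member comparisons).
    """
    # Visit clusters whose centers are closest to q first, so best_d shrinks early.
    order = sorted(index, key=lambda entry: math.dist(q, entry[0]))
    best, best_d, compared = None, math.inf, 0
    for c, members, r in order:
        if math.dist(q, c) - r >= best_d:
            continue  # pruned: every member is at least best_d away from q
        for x in members:
            compared += 1
            d = math.dist(q, x)
            if d < best_d:
                best, best_d = x, d
    return best, best_d, compared

# Hypothetical data: two clusters of 2-D points standing in for face feature vectors.
clusters = [
    ((0, 0), [(0, 1), (1, 0), (-1, 0), (0, -1)]),
    ((10, 10), [(10, 11), (11, 10), (9, 10), (10, 9)]),
]
index = build_index(clusters)
print(nearest(index, (1, 1)))  # ((0, 1), 1.0, 4)
```

Here only the 4 points of the nearby cluster are compared; the far cluster is ruled out from its center alone. How much work is saved in practice depends on how compact and well separated the clusters are.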

Input to a clustering algorithm is either

- the distance between each pair of objects in the dataset, or

- a feature vector for each object in the dataset
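Both input forms, and the image-segmentation example, can be sketched on a tiny image. This is a minimal illustration under stated assumptions: the 3x4 image is made up, each pixel's feature vector is taken to be (row, column, intensity), distances are Euclidean, and k-means is used only as one familiar algorithm that consumes feature vectors; it is not a method these notes have introduced.

```python
import math

# Hypothetical 3x4 grayscale image: a dark 2x2 object on a bright background.
image = [
    [200, 210, 205, 198],
    [202,  30,  25, 207],
    [199,  28,  35, 201],
]

# Input form 2: a feature vector for each object (pixel), here (row, col, intensity).
features = [(r, c, v) for r, row in enumerate(image) for c, v in enumerate(row)]

# Input form 1: the distance between each pair of objects (Euclidean on the features).
dist = [[math.dist(p, q) for q in features] for p in features]

def kmeans(points, centers, iters=10):
    """Plain k-means (Lloyd's iterations) starting from the given initial centers."""
    for _ in range(iters):
        # Assign every point to its nearest center.
        labels = [min(range(len(centers)), key=lambda k: math.dist(p, centers[k]))
                  for p in points]
        # Move each center to the mean of the points assigned to it.
        new_centers = []
        for k in range(len(centers)):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centers.append(tuple(sum(xs) / len(members) for xs in zip(*members)))
            else:
                new_centers.append(centers[k])  # keep an empty cluster's center
        if new_centers == centers:
            break  # assignments have stabilized
        centers = new_centers
    return labels

# Start from the darkest and the brightest pixel so the run is deterministic.
init = [min(features, key=lambda f: f[2]), max(features, key=lambda f: f[2])]
labels = kmeans(features, init)
width = len(image[0])
segmentation = [labels[r * width:(r + 1) * width] for r in range(len(image))]
print(segmentation)  # [[1, 1, 1, 1], [1, 0, 0, 1], [1, 0, 0, 1]]
```

The dark 2x2 block ends up in cluster 0 and the background in cluster 1, so each cluster corresponds to an object in the image. `dist` is the other input form; a method that works only from pairwise distances (for example, agglomerative hierarchical clustering) would take it instead of `features`.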