
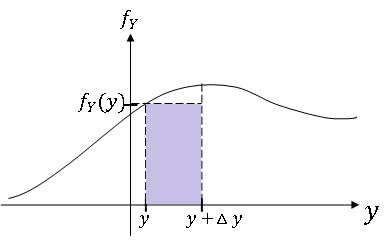


Revision as of 12:54, 15 October 2013

Random Variables and Signals

Topic 8: Functions of Random Variables

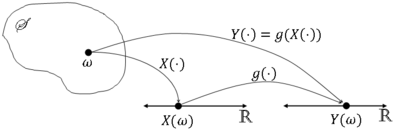

We often do not work with the random variable we observe directly, but with some function of that random variable. So, instead of working with a random variable X, we might instead have some random variable Y=g(X) for some function g:R → R.

In this case, we might model Y directly to get $f_Y(y)$, especially if we do not know g. Or we might have a model for X and find $f_Y(y)$ (or $p_Y(y)$) as a function of $f_X$ (or $p_X$) and g.

We will discuss the latter approach here.

More formally, let X be a random variable on (S,F,P) and consider a mapping g: R → R. Then let $Y(\omega) = g(X(\omega))$ $\forall \omega \in S$.

We normally write this as Y=g(X).

Graphically,

Is Y a random variable? For it to be one, the set $Y^{-1}(A) \equiv \{\omega \in S : Y(\omega) \in A\} = \{\omega \in S : g(X(\omega)) \in A\}$ must be an element of F for all A ∈ B(R) (i.e., Y must be Borel measurable).

We will only consider functions g in this class for which $Y^{-1}(A) \in F$ for all A ∈ B(R), so that if Y = g(X) for some random variable X, Y will be a random variable.

What is the distribution of Y? Consider 3 cases:

- X discrete, Y discrete

- X continuous, Y discrete

- X continuous, Y continuous

Note: you cannot have a continuous Y from a discrete X.


Case 1: X and Y Discrete

Let $R_X \equiv X(S)$ be the range space of X and $R_Y \equiv g(X(S))$ be the range space of Y. Then the pmf of Y is

$$p_Y(y) = P(Y=y) = P(g(X)=y)$$

But this means that

$$p_Y(y) = \sum_{x \in R_X:\; g(x) = y} p_X(x), \qquad y \in R_Y.$$

Example Let X be the value rolled on a die, and let Y = 1 if X is odd and Y = 0 if X is even.

Then $R_X = \{1,2,3,4,5,6\}$, $R_Y = \{0,1\}$, and g(x) = x % 2.

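Now

$$p_Y(0) = p_X(2) + p_X(4) + p_X(6), \qquad p_Y(1) = p_X(1) + p_X(3) + p_X(5),$$

so for a fair die $p_Y(0) = p_Y(1) = 1/2$.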
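The sum over preimages is easy to compute directly. Below is a minimal Python sketch, assuming a fair die (the example does not say so explicitly); `pmf_of_function` is a hypothetical helper name, and exact fractions are used so the probabilities add to exactly 1.

```python
from collections import defaultdict
from fractions import Fraction


def pmf_of_function(p_x, g):
    """Return the pmf of Y = g(X) for a discrete X with pmf p_x (a dict x -> P(X = x)).

    Each p_X(x) is added to the entry for y = g(x), so p_Y(y) is the sum of
    p_X(x) over all x in R_X with g(x) = y.
    """
    p_y = defaultdict(Fraction)  # Fraction() == 0, so missing keys start at 0
    for x, prob in p_x.items():
        p_y[g(x)] += prob
    return dict(p_y)


# Assumption: a fair die, p_X(x) = 1/6 for x in R_X = {1, ..., 6}.
p_x = {x: Fraction(1, 6) for x in range(1, 7)}
p_y = pmf_of_function(p_x, lambda x: x % 2)
print(p_y)  # {1: Fraction(1, 2), 0: Fraction(1, 2)}
```

The same helper works for any g, since it only needs to evaluate g at the points of $R_X$.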

Case 2: X Continuous, Y Discrete

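The pmf of Y in this case is

$$p_Y(y) = P(g(X)=y) = P(X \in D_y) = \int_{D_y} f_X(x)\,dx,$$

where $D_y = \{x \in \mathbb{R} : g(x) = y\}$.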

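Example Let $g(x) = u(x - x_0)$ for some $x_0 \in \mathbb{R}$, where u is the unit step function, and let Y = g(X). Then $R_Y = \{0,1\}$ and

$$p_Y(0) = P(X < x_0) = F_X(x_0), \qquad p_Y(1) = P(X \ge x_0) = 1 - F_X(x_0),$$

since $P(X = x_0) = 0$ for continuous X (so the value assigned to u(0) does not matter).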
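A minimal sketch of this example in Python, assuming for illustration that X is standard normal and $x_0 = 1$ (neither is specified in the notes). It uses $p_Y(0) = F_X(x_0)$ and $p_Y(1) = 1 - F_X(x_0)$; `step_pmf` and `normal_cdf` are hypothetical helper names, and a short simulation serves as a sanity check.

```python
import math
import random


def normal_cdf(x):
    # Standard normal cdf via the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def step_pmf(F_x, x0):
    """pmf of Y = u(X - x0) for a continuous X with cdf F_x.

    Y = 0 exactly when X < x0 and Y = 1 when X >= x0; since P(X = x0) = 0,
    the convention chosen for u(0) does not change the result.
    """
    p0 = F_x(x0)
    return {0: p0, 1: 1.0 - p0}


x0 = 1.0
p_y = step_pmf(normal_cdf, x0)

# Monte Carlo sanity check: simulate X and count how often Y = u(X - x0) equals 1.
random.seed(0)
n = 100_000
freq1 = sum(1 for _ in range(n) if random.gauss(0.0, 1.0) >= x0) / n
print(p_y, freq1)
```

Only the cdf of X enters the pmf of Y here, so swapping in another continuous distribution means replacing `normal_cdf`.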

Case 3: X and Y Continuous

We will discuss 2 methods for finding $f_Y$ in this case.

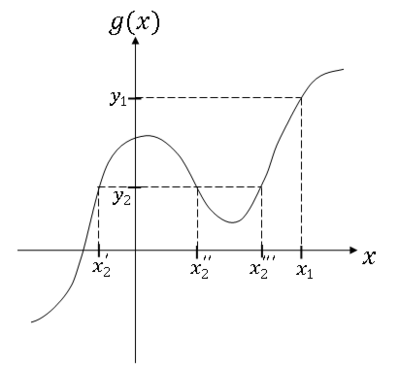

Approach 1

First, find the cdf $F_Y$:

$$F_Y(y) = P(Y \le y) = P(g(X) \le y) = P(X \in D_y),$$

where $D_y = \{x \in \mathbb{R} : g(x) \le y\}$.

Then

$$F_Y(y) = \int_{D_y} f_X(x)\,dx.$$

Differentiate $F_Y$ to get $f_Y$.

You can find $D_y$ graphically or analytically.
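Approach 1 can also be carried out numerically when $D_y$ is awkward to describe: integrate $f_X$ over the points where $g(x) \le y$, then differentiate. The sketch below assumes X is standard normal and $g(x) = x^3$ purely for illustration; the grid bounds, grid size, and difference step are arbitrary choices, and the helper names are hypothetical.

```python
import math


def normal_pdf(x):
    # Standard normal density (illustrative choice of f_X).
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def cube(x):
    # Illustrative choice of g.
    return x ** 3


def cdf_of_function(f_x, g, y, lo=-8.0, hi=8.0, n=100_000):
    """Approximate F_Y(y) = integral of f_X over D_y = {x : g(x) <= y}.

    Midpoint rule on [lo, hi]; a grid point contributes only if g(x) <= y.
    Assumes f_X puts negligible probability outside [lo, hi].
    """
    h = (hi - lo) / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * h
        if g(x) <= y:
            total += f_x(x)
    return total * h


def pdf_of_function(f_x, g, y, dy=0.05):
    # Differentiate F_Y with a central difference to approximate f_Y(y).
    upper = cdf_of_function(f_x, g, y + dy)
    lower = cdf_of_function(f_x, g, y - dy)
    return (upper - lower) / (2.0 * dy)


F = cdf_of_function(normal_pdf, cube, 1.0)
f = pdf_of_function(normal_pdf, cube, 1.0)
print(F, f)
```

For this g, $D_y = (-\infty, y^{1/3}]$, so the exact values are $F_Y(1) = F_X(1) \approx 0.8413$ and $f_Y(1) = f_X(1)/3 \approx 0.0807$; the midpoint rule and central difference only approximate these.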

Example

For $y = y_1$ and $y = y_2$,

Then

Example Y = aX + b, a,b ∈ R, a ≠ 0

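Here $D_y = \{x \in \mathbb{R} : ax + b \le y\}$, which is $(-\infty, (y-b)/a]$ if a > 0 and $[(y-b)/a, \infty)$ if a < 0. So,

$$F_Y(y) = \begin{cases} F_X\left(\frac{y-b}{a}\right), & a > 0 \\ 1 - F_X\left(\frac{y-b}{a}\right), & a < 0 \end{cases}$$

where the a < 0 case uses $P(X = (y-b)/a) = 0$ for continuous X. Then, differentiating,

$$f_Y(y) = \frac{1}{|a|}\, f_X\left(\frac{y-b}{a}\right).$$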
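A quick check of the result for Y = aX + b, assuming X ~ N(0, 1) for illustration. In that case Y is Gaussian with mean b and standard deviation |a|, so $f_X((y-b)/a)/|a|$ can be compared against that density; `linear_transform_pdf` is a hypothetical helper name.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    # Gaussian density with mean mu and standard deviation sigma.
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def linear_transform_pdf(f_x, a, b):
    """Return f_Y for Y = aX + b, using f_Y(y) = f_X((y - b) / a) / |a|."""
    if a == 0:
        raise ValueError("a must be nonzero")
    return lambda y: f_x((y - b) / a) / abs(a)


# Assumption for illustration: X ~ N(0, 1), a = -2, b = 3, so Y ~ N(3, 2**2).
f_y = linear_transform_pdf(normal_pdf, a=-2.0, b=3.0)
for y in (-1.0, 3.0, 5.5):
    print(y, f_y(y), normal_pdf(y, mu=3.0, sigma=2.0))
```

A negative a is used deliberately: without the absolute value the returned "density" would be negative.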

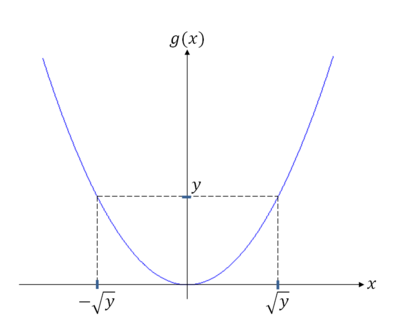

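Example $Y = X^2$

For y < 0, $D_y = \emptyset$.

For y ≥ 0, $D_y = [-\sqrt{y}, \sqrt{y}]$.

So, $F_Y(y) = 0$ for y < 0,

and, for y ≥ 0,

$$F_Y(y) = \int_{-\sqrt{y}}^{\sqrt{y}} f_X(x)\,dx.$$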
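Since X is continuous, this cdf is $F_Y(y) = F_X(\sqrt{y}) - F_X(-\sqrt{y})$ for $y \ge 0$ and 0 for $y < 0$, which can be sanity-checked by simulation. A minimal sketch, assuming X ~ N(0, 1) for illustration; `cdf_of_square` is a hypothetical helper name.

```python
import math
import random


def normal_cdf(x):
    # Standard normal cdf via the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def cdf_of_square(F_x, y):
    """F_Y(y) for Y = X**2 with X continuous and cdf F_x."""
    if y < 0:
        return 0.0  # D_y is empty
    r = math.sqrt(y)
    return F_x(r) - F_x(-r)  # P(-sqrt(y) <= X <= sqrt(y))


# Assumption for illustration: X ~ N(0, 1). Compare with simulated values of X**2.
random.seed(1)
samples = [random.gauss(0.0, 1.0) ** 2 for _ in range(100_000)]
for y in (0.5, 1.0, 4.0):
    empirical = sum(s <= y for s in samples) / len(samples)
    print(y, cdf_of_square(normal_cdf, y), empirical)
```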

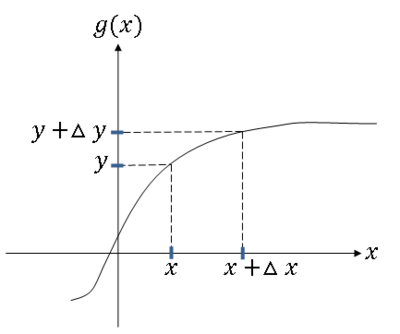

Approach 2

Use a formula for $f_Y$ in terms of $f_X$. To derive the formula, assume the inverse function $g^{-1}$ exists, so if y = g(x), then $x = g^{-1}(y)$. Also assume g and $g^{-1}$ are differentiable. Then, if Y = g(X), we have that

$$f_Y(y) = \frac{f_X(g^{-1}(y))}{\left|\frac{dy}{dx}\right|_{x=g^{-1}(y)}}$$

Proof:

First, consider g strictly monotone increasing.

Let y = g(x) and y + Δy = g(x + Δx). Since g is increasing, {y < Y ≤ y + Δy} = {x < X ≤ x + Δx}, so P(y < Y ≤ y + Δy) = P(x < X ≤ x + Δx).

Use the following approximations:

- $P(y < Y \le y + \Delta y) \approx f_Y(y)\,\Delta y$

- $P(x < X \le x + \Delta x) \approx f_X(x)\,\Delta x$

Since the left-hand sides are equal,

$$f_Y(y)\,\Delta y \approx f_X(x)\,\Delta x$$

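Now as Δy → 0, we also have Δx → 0, since $g^{-1}$ is continuous (it is differentiable by assumption), and the approximations above become equalities. Renaming Δy and Δx as dy and dx respectively and letting Δy → 0, we get

$$\begin{aligned} f_Y(y)\,dy &= f_X(x)\,dx \\ \Rightarrow f_Y(y) &= f_X(x)\frac{dx}{dy} \end{aligned}$$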

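Using $\frac{dx}{dy} = 1 \big/ \frac{dy}{dx}$ and $x = g^{-1}(y)$, we normally write this as

$$f_Y(y) = \frac{f_X(g^{-1}(y))}{\left.\frac{dy}{dx}\right|_{x=g^{-1}(y)}}$$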

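A similar derivation for g monotone decreasing (where Δx < 0 when Δy > 0, so that $f_Y(y)\,\Delta y \approx f_X(x)\,|\Delta x|$) gives us the general result for invertible g:

$$f_Y(y) = \frac{f_X(g^{-1}(y))}{\left|\frac{dy}{dx}\right|_{x=g^{-1}(y)}}$$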
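The formula for invertible g translates directly into a higher-order function. A minimal sketch, assuming X ~ N(0, 1) and the illustrative maps $g(x) = e^x$ (increasing) and $g(x) = e^{-x}$ (decreasing); `monotone_transform_pdf` is a hypothetical name, and the derivative $dy/dx$ is passed in explicitly rather than computed. Because X is symmetric, both maps give the same (lognormal) density, which should match a numerical derivative of $F_Y(y) = F_X(\ln y)$.

```python
import math


def normal_pdf(x):
    # Standard normal density (illustrative choice of f_X).
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def normal_cdf(x):
    # Standard normal cdf via the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def monotone_transform_pdf(f_x, g_inv, dg):
    """Return f_Y for Y = g(X) with g strictly monotone and differentiable.

    f_Y(y) = f_X(g^{-1}(y)) / |dy/dx| evaluated at x = g^{-1}(y);
    dg(x) is dy/dx = g'(x). The absolute value covers decreasing g.
    """
    def f_y(y):
        x = g_inv(y)
        return f_x(x) / abs(dg(x))
    return f_y


# Increasing g(x) = exp(x): g^{-1}(y) = ln y, g'(x) = exp(x).
f_inc = monotone_transform_pdf(normal_pdf, math.log, math.exp)
# Decreasing g(x) = exp(-x): g^{-1}(y) = -ln y, g'(x) = -exp(-x).
f_dec = monotone_transform_pdf(normal_pdf, lambda y: -math.log(y), lambda x: -math.exp(-x))

# Cross-check against a central difference of F_Y(y) = F_X(ln y).
h = 1e-5
for y in (0.5, 1.0, 2.0):
    numeric = (normal_cdf(math.log(y + h)) - normal_cdf(math.log(y - h))) / (2 * h)
    print(y, f_inc(y), f_dec(y), numeric)
```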

Note that the result for invertible g can be extended to the case where y = g(x) has n solutions $x_1, \ldots, x_n$, in which case

$$f_Y(y) = \sum_{i=1}^{n} \frac{f_X(x_i)}{\left|\frac{dy}{dx}\right|_{x=x_i}}$$

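For example, if $Y = X^2$, then for y > 0 the solutions are

$$x_1 = -\sqrt{y}, \quad x_2 = \sqrt{y},$$

and $\left|\frac{dy}{dx}\right| = |2x| = 2\sqrt{y}$ at both, so

$$f_Y(y) = \frac{f_X(-\sqrt{y})}{2\sqrt{y}} + \frac{f_X(\sqrt{y})}{2\sqrt{y}}$$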
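The multiple-root formula can be sketched the same way: sum $f_X(x_i)/|dy/dx|$ over the roots of g(x) = y. Assuming X ~ N(0, 1) for illustration, $Y = X^2$ has the chi-square density with one degree of freedom, $e^{-y/2}/\sqrt{2\pi y}$ for y > 0, which the sketch uses as a cross-check; all helper names are hypothetical.

```python
import math


def normal_pdf(x):
    # Standard normal density (illustrative choice of f_X).
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def pdf_from_roots(f_x, roots, dg, y):
    """f_Y(y) = sum over the solutions x_i of g(x) = y of f_X(x_i) / |g'(x_i)|.

    roots(y) returns the list of solutions; dg(x) is dy/dx = g'(x).
    """
    return sum(f_x(x) / abs(dg(x)) for x in roots(y))


def roots_of_square(y):
    # Solutions of x**2 = y: none for y < 0; y = 0 (where g'(0) = 0) is skipped.
    if y <= 0:
        return []
    r = math.sqrt(y)
    return [-r, r]


def dsquare(x):
    return 2.0 * x  # dy/dx for g(x) = x**2


def chi2_1_pdf(y):
    # Density of Z**2 for Z ~ N(0, 1): chi-square with 1 degree of freedom, y > 0.
    return math.exp(-y / 2.0) / math.sqrt(2.0 * math.pi * y)


for y in (0.25, 1.0, 3.0):
    print(y, pdf_from_roots(normal_pdf, roots_of_square, dsquare, y), chi2_1_pdf(y))
```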

References

- M. Comer. ECE 600. Class Lecture. Random Variables and Signals. Faculty of Electrical Engineering, Purdue University. Fall 2013.