| Line 55: | Line 55: | ||

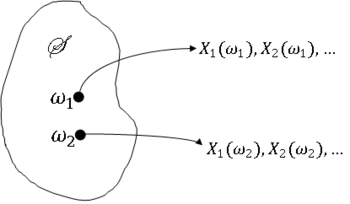

Since X<math>_n(\omega)</math> is generally a different sequence for very <math>\omega</math> ∈ ''S'', what does it mean for X<math>_n</math> to converge? We will discuss different ways in which X<math>_n</math> can converge. | Since X<math>_n(\omega)</math> is generally a different sequence for very <math>\omega</math> ∈ ''S'', what does it mean for X<math>_n</math> to converge? We will discuss different ways in which X<math>_n</math> can converge. | ||

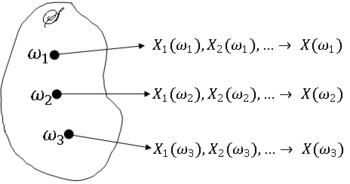

| − | '''Definition''' <math>\qquad</math> X<math>_n</math> '''converges everywhere''' if the sequence X<math>_1(\omega)</math>,X< | + | '''Definition''' <math>\qquad</math> X<math>_n</math> '''converges everywhere''' if the sequence X<math>_1(\omega)</math>,X<math>_2(\omega)</math>,... converges to some value X<math>(\omega)</math> ∀<math>\omega</math> ∈ ''S''. We also call this '''sure convergence''' or '''convergence surely'''. |

Revision as of 09:14, 22 November 2013

Random Variables and Signals

Topic 18: Stochastic Convergence

Stochastic Convergence

We will now consider infinite sequences of random variables. We will discuss what it means for such a sequence to converge. This will lead to some very important results: the laws of large numbers and the Central Limit Theorem.

Consider a sequence X$ _1 $,X$ _2 $,..., where each X$ _i $ is a random variable on (S,F,P). We will call this a random sequence (or a discrete-time random process).

Notation $ \qquad $ X$ _n $ may refer to either the sequence itself or to the nth element in the sequence. We may also use {X$ _n $} to denote the sequence, or X$ _n $, n ≥ 1.

The sequence X$ _n $ maps S to the set of all sequences of real numbers, so for a fixed S, X$ _1(\omega) $,X$ _2(\omega) $,... is a sequence of real numbers.

Before looking at convergence, recall the meaning of convergence or a sequence of real numbers.

Definition $ \qquad $ A sequence of real numbers x$ _1 $,x$ _2 $,... converges to a number x ∈ R if ∀$ \epsilon $ > 0, ∃ an n$ _{\epsilon} $ ∈ N such that

If there is such an x ∈ R, we say

or

For a random sequence X$ _n $, the issue of convergence is more complicated since X$ _n $ is a function of $ \omega $ ∈ S.

First look at a motivating example.

Example $ \qquad $ Let X$ _k $ = s + W$ _k $, where s ∈ R and W$ _k $ is a random sequence with E[W$ _k $] = 0 ∀k = 1,2,.... W$ _k $ can be viewed as a noise sequence if we want to know the value of s.

Let

Then, E[Y$ _n $] = s ∀n. But Y$ _n $ is a random variable, so we cannot expect Y$ _n $ = s ∀n. However, we intuitively expect Y$ _n $ to be a better estimate of s as n increases. Does Y$ _n $ → s as n → ∞ ? If so, in what sense?

Types of Convergence

Since X$ _n(\omega) $ is generally a different sequence for very $ \omega $ ∈ S, what does it mean for X$ _n $ to converge? We will discuss different ways in which X$ _n $ can converge.

Definition $ \qquad $ X$ _n $ converges everywhere if the sequence X$ _1(\omega) $,X$ _2(\omega) $,... converges to some value X$ (\omega) $ ∀$ \omega $ ∈ S. We also call this sure convergence or convergence surely.

Notation

Definition $ \qquad $ X$ _n $ converges almost everywhere or almost surely, if X$ _n(\omega) $ → for some A ∈ S with P(A) = 1. Also known as convergence with probability 1.

Notation

Definition $ \qquad $ X$ _n $ converges in mean square to X if

as

Notation

Definition X$ _n $ converges in probability to X if ∀$ \epsilon $ > 0

as

Note that P(|X$ _n $ - X| > $ \epsilon $) is a sequence of real numbers.

Notation

Definition $ \qquad $ X$ _n $ converges in distribution to X if

as

Note that for a fixed x ∈ R, F$ _n $(x) is a sequence of real numbers.

Notation

We will generally assume that if X$ _n $ converges in distribution, then

or

$ f_{X_n}(x)\rightarrow f_X(x) $

as

although this is not true in every instance, as seen in the next example.

Example $ \qquad $ Let X$ _n $ have pdf

Then

Now

as

$ n\rightarrow\infty $

where

However, f$ _{Xn} $ does not converge for x ∈ (0,1).

Cauchy Criterion for Convergence

We will now discuss a method