The Comer Lectures on Random Variables and Signals

Topic 17: Random Vectors

Random Vectors

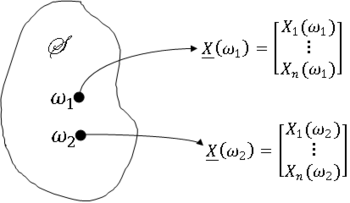

Definition $ \qquad $ Let X$ _1 $,..., X$ _n $ be n random variables on (S,F,P). The column vector X given by

$ X = [X_1, \ldots, X_n]^T $

is a random vector (RV) on (S,F,P).

We can view X($ \omega $) as a point in R$ ^n $ ∀$ \omega $ ∈ S.

Much of what we need to work with random vectors we can get by a simple extension of what we have developed for n = 2.

For example:

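- The cumulative distribution function of X is

$ F_X(x) = F_{X_1 \ldots X_n}(x_1, \ldots, x_n) = P(X_1 \leq x_1, \ldots, X_n \leq x_n) $

- and the probability density function of X is

$ f_X(x) = \frac{\partial^n F_X(x)}{\partial x_1 \cdots \partial x_n} $

- For any D ⊂ R$ ^n $ such that D ∈ B(R$ ^n $),

$ P(X \in D) = \int_D f_X(x) \, dx $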

- Note that B(R$ ^n $) is the $ \sigma $-field generated by the collection of all open n-dimensional hypercubes (more formally, k-cells) in R$ ^n $.

- The formula for the joint pdf of two functions of two random variables can be extended to find the pdf of n functions of n random variables (see Papoulis).

- The random variables X$ _1 $,..., X$ _n $ are statistically independent if the events {X$ _1 $ ∈ A$ _1 $},..., {X$ _n $ ∈ A$ _n $} are independent ∀A$ _1 $, ..., A$ _n $ ∈ B(R). An equivalent definition is that X$ _1 $,..., X$ _n $ are independent if

$ f_X(x) = \prod_{i=1}^{n} f_{X_i}(x_i) \quad \forall x \in R^n $

Random Vectors: Moments

We will spend some time on moments of random vectors. We will be especially interested in pairwise covariances/correlations.

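The correlation between X$ _j $ and X$ _k $ is denoted R$ _{jk} $, so

$ R_{jk} = E[X_j X_k] $

and the covariance is C$ _{jk} $:

$ C_{jk} = E[(X_j - \mu_{X_j})(X_k - \mu_{X_k})] $

For a random vector X, we define the correlation matrix R$ _X $ as

$ R_X = \begin{bmatrix} R_{11} & \cdots & R_{1n} \\ \vdots & \ddots & \vdots \\ R_{n1} & \cdots & R_{nn} \end{bmatrix} $

and the covariance matrix C$ _X $ as

$ C_X = \begin{bmatrix} C_{11} & \cdots & C_{1n} \\ \vdots & \ddots & \vdots \\ C_{n1} & \cdots & C_{nn} \end{bmatrix} $

The mean vector $ \mu_X $ is

$ \mu_X = E[X] = [\mu_{X_1}, \ldots, \mu_{X_n}]^T $

Note that the correlation matrix and the covariance matrix can be written as

$ R_X = E[X X^T], \qquad C_X = E[(X - \mu_X)(X - \mu_X)^T] $

Note that $ \mu_X $, R$ _X $ and C$ _X $ are the moments we most commonly use for random vectors.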
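As a rough numerical illustration of these moments, the sketch below estimates the mean vector, the correlation matrix (entries E[X$ _j $X$ _k $]) and the covariance matrix from samples using plain sample averages. The helper name `sample_moments` and the two-component test vector are assumptions made for this example only; they are not part of the lecture. With 1/m averaging, the standard identity C$ _{jk} $ = R$ _{jk} $ − $ \mu_{X_j}\mu_{X_k} $ holds exactly for the estimates, up to rounding.

```python
import random


def sample_moments(samples):
    """Estimate mean vector, correlation matrix and covariance matrix
    from a list of n-dimensional samples using 1/m sample averages."""
    m = len(samples)
    n = len(samples[0])
    mu = [sum(x[j] for x in samples) / m for j in range(n)]
    R = [[sum(x[j] * x[k] for x in samples) / m for k in range(n)]
         for j in range(n)]
    C = [[sum((x[j] - mu[j]) * (x[k] - mu[k]) for x in samples) / m
          for k in range(n)]
         for j in range(n)]
    return mu, R, C


# Hypothetical test vector: X_1 = 1 + U, X_2 = 2 + 0.5 U + V,
# with U, V independent standard Gaussians, so Cov(X_1, X_2) = 0.5.
rng = random.Random(0)
samples = []
for _ in range(5000):
    u = rng.gauss(0.0, 1.0)
    v = rng.gauss(0.0, 1.0)
    samples.append([1.0 + u, 2.0 + 0.5 * u + v])

mu, R, C = sample_moments(samples)

# Largest deviation from C_jk = R_jk - mu_j * mu_k over all entries.
max_gap = max(abs(C[j][k] - (R[j][k] - mu[j] * mu[k]))
              for j in range(2) for k in range(2))
print(mu, R, C, max_gap)
```

The estimates only approximate the true moments; the identity between C and R is what the sketch checks exactly.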

We need to discuss an important property of R$ _X $, but first, a definition from linear algebra.

Definition $ \qquad $ An n × n real matrix B with b$ _{ij} $ as its i,j$ ^{th} $ entry is non-negative definite (NND) (or positive semidefinite) if

$ \sum_{i=1}^{n} \sum_{j=1}^{n} x_i x_j b_{ij} \geq 0 $

for all real vectors [x$ _1 $,...,x$ _n $]$ ^T $ ∈ R$ ^n $.

That is to say, for any real vector x, the product x$ ^T $Bx is non-negative.

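Theorem $ \qquad $ For any random vector X, R$ _X $ is NND.

Proof: $ \qquad $ Let a be an arbitrary real vector in R$ ^n $, and let

$ Z = a^T X = \sum_{j=1}^{n} a_j X_j $

be a scalar random variable. Then

$ E[Z^2] = E[a^T X X^T a] = a^T E[X X^T] a = a^T R_X a $

So

$ a^T R_X a = E[Z^2] \geq 0 $

and thus R$ _X $ is NND.

Note: C$ _X $ is also NND; the same argument applies with X replaced by X − $ \mu_X $.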
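The NND property can be spot-checked numerically. The following is a minimal sketch, assuming the correlation matrix is estimated by sample averages of X$ _j $X$ _k $; the helper `quad_form` and the three-component test vector are hypothetical choices for illustration. It evaluates the quadratic form a$ ^T $Ra for many randomly drawn real vectors a and records the smallest value seen.

```python
import random


def quad_form(a, B):
    """Return the quadratic form sum_i sum_j a_i a_j b_ij."""
    n = len(a)
    return sum(a[i] * a[j] * B[i][j] for i in range(n) for j in range(n))


rng = random.Random(1)

# Hypothetical 3-dimensional random vector with dependent components.
samples = []
for _ in range(2000):
    u = rng.uniform(-1.0, 1.0)
    v = rng.uniform(-1.0, 1.0)
    samples.append([u, u + v, u * v + 3.0])

m, n = len(samples), 3
# Sample correlation matrix: R_jk is the average of x_j * x_k.
R = [[sum(x[j] * x[k] for x in samples) / m for k in range(n)]
     for j in range(n)]

# Smallest quadratic form over 1000 random test vectors a.
worst = min(quad_form([rng.uniform(-5.0, 5.0) for _ in range(n)], R)
            for _ in range(1000))
print(worst)
```

A random search like this cannot prove non-negative definiteness; it only fails to find a counterexample, which is consistent with the theorem.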

Characteristic Functions of Random Vectors

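Definition $ \qquad $ Let X be a random vector on (S,F,P). Then the characteristic function of X is

$ \Phi_X(\Omega) = E\left[e^{i\Omega^T X}\right] = E\left[e^{i\sum_{j=1}^{n} \omega_j X_j}\right] $

where

$ \Omega = [\omega_1, \ldots, \omega_n]^T \in R^n $

The characteristic function Φ$ _X $ is extremely useful for finding pdfs of sums of random variables.

Let

$ Z = \sum_{j=1}^{n} X_j $

Then

$ \Phi_Z(\omega) = E\left[e^{i\omega Z}\right] = E\left[e^{i\omega\sum_{j=1}^{n} X_j}\right] = \Phi_X(\omega, \ldots, \omega) $

If X$ _1 $,..., X$ _n $ are independent, then

$ \Phi_Z(\omega) = E\left[\prod_{j=1}^{n} e^{i\omega X_j}\right] = \prod_{j=1}^{n} \Phi_{X_j}(\omega) $

If, in addition, X$ _1 $,..., X$ _n $ are identically distributed with common characteristic function Φ$ _X $, then

$ \Phi_Z(\omega) = \left(\Phi_X(\omega)\right)^n $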
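The i.i.d. case, where the characteristic function of the sum is the n-th power of the common characteristic function, can be checked exactly on a discrete example. The sketch below assumes X$ _1 $,..., X$ _n $ are independent Bernoulli(p) random variables, so that their sum is Binomial(n, p). It compares the characteristic function computed directly from the binomial pmf with the n-th power of the Bernoulli characteristic function. The function names and the values of n and p are illustrative assumptions.

```python
import cmath
from math import comb


def cf_bernoulli(omega, p):
    """Characteristic function E[exp(i*omega*X)] of a Bernoulli(p) variable."""
    return (1 - p) + p * cmath.exp(1j * omega)


def cf_binomial_direct(omega, n, p):
    """Characteristic function of Binomial(n, p), summed over its pmf."""
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) * cmath.exp(1j * omega * k)
               for k in range(n + 1))


n, p = 8, 0.3
omegas = [-2.0, -0.5, 0.0, 0.7, 3.1]

# Difference between the direct computation and the product formula.
gaps = [abs(cf_binomial_direct(w, n, p) - cf_bernoulli(w, p) ** n)
        for w in omegas]
print(max(gaps))
```

The two computations agree up to floating-point rounding, which is the binomial theorem in disguise.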

Gaussian Random Vectors

Definition $ \qquad $ Let X be a random vector on (S,F,P). Then X is Gaussian and X$ _1 $,..., X$ _n $ are said to be jointly Gaussian iff

$ Z = a_0 + \sum_{j=1}^{n} a_j X_j $

is a Gaussian random variable ∀[a$ _0 $,..., a$ _n $] ∈ R$ ^{n+1} $.

Now we will show that the characteristic function of a Gaussian random vector X is

$ \Phi_X(\Omega) = e^{i\Omega^T \mu_X - \frac{1}{2}\Omega^T C_X \Omega} $

where $ \mu_X $ is the mean vector of X and C$ _X $ is the covariance matrix.

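Proof $ \qquad $ Let

$ Z = \sum_{j=1}^{n} \omega_j X_j = \Omega^T X $

for

$ \Omega = [\omega_1, \ldots, \omega_n]^T \in R^n $

Then Z is a Gaussian random variable since X is Gaussian. So

$ \Phi_Z(\omega) = e^{i\omega\mu_Z - \frac{1}{2}\omega^2\sigma_Z^2} $

where

$ \mu_Z = E[Z] = \sum_{j=1}^{n} \omega_j \mu_{X_j} = \Omega^T \mu_X $

and

$ \sigma_Z^2 = E[(Z - \mu_Z)^2] = \sum_{j=1}^{n} \sum_{k=1}^{n} \omega_j \omega_k C_{jk} = \Omega^T C_X \Omega $

where

$ C_{jk} = E[(X_j - \mu_{X_j})(X_k - \mu_{X_k})] $

and C$ _X $ is the covariance matrix of X.

Now

$ \Phi_X(\Omega) = E\left[e^{i\Omega^T X}\right] = E\left[e^{iZ}\right] = \Phi_Z(1) $

Plugging the expressions for $ \mu_Z $ and $ \sigma_Z^2 $ into Φ$ _Z $ gives

$ \Phi_X(\Omega) = e^{i\Omega^T \mu_X - \frac{1}{2}\Omega^T C_X \Omega} $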
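The closed form exp(iΩ$ ^T $μ − ½ Ω$ ^T $CΩ) for the characteristic function of a Gaussian random vector can be compared against a Monte Carlo estimate of E[exp(iΩ$ ^T $X)]. The sketch below is only an illustration under stated assumptions: X is built as μ + AW with W a vector of independent standard Gaussians, so that X is Gaussian with covariance matrix AA$ ^T $. The matrix A, the mean vector, the test point Ω and the sample size are all arbitrary choices for this example.

```python
import cmath
import random


def gaussian_cf(omega, mu, C):
    """Evaluate exp(i * omega^T mu - 0.5 * omega^T C omega)."""
    n = len(mu)
    lin = sum(omega[j] * mu[j] for j in range(n))
    quad = sum(omega[j] * omega[k] * C[j][k]
               for j in range(n) for k in range(n))
    return cmath.exp(1j * lin - 0.5 * quad)


# Assumed construction: X = mu + A W, W iid N(0, 1), hence C = A A^T.
mu = [1.0, -0.5]
A = [[1.0, 0.0], [0.6, 0.8]]
C = [[sum(A[j][l] * A[k][l] for l in range(2)) for k in range(2)]
     for j in range(2)]

rng = random.Random(2)
omega = [0.4, -0.7]
N = 20000

# Monte Carlo estimate of E[exp(i * omega^T X)].
acc = 0j
for _ in range(N):
    w = [rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)]
    x = [mu[j] + sum(A[j][l] * w[l] for l in range(2)) for j in range(2)]
    acc += cmath.exp(1j * (omega[0] * x[0] + omega[1] * x[1]))

cf_mc = acc / N
cf_formula = gaussian_cf(omega, mu, C)
print(cf_mc, cf_formula)
```

The Monte Carlo error shrinks roughly like 1/√N, so the two values should agree to about two decimal places here, not exactly.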

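Note that we can use the equation

$ f_X(x) = \frac{1}{(2\pi)^n} \int_{R^n} \Phi_X(\Omega) e^{-i\Omega^T x} \, d\Omega $

to show that if X is Gaussian and C$ _X $ is invertible, then

$ f_X(x) = \frac{1}{(2\pi)^{n/2} |C_X|^{1/2}} \exp\left(-\frac{1}{2}(x - \mu_X)^T C_X^{-1} (x - \mu_X)\right) $

Note that if X$ _1 $,..., X$ _n $ are pairwise uncorrelated, then the covariance matrix C$ _X $ of X is diagonal and

$ f_X(x) = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi}\,\sigma_{X_i}} \exp\left(-\frac{(x_i - \mu_{X_i})^2}{2\sigma_{X_i}^2}\right) = \prod_{i=1}^{n} f_{X_i}(x_i) $

so jointly Gaussian random variables that are pairwise uncorrelated are also independent.

Finally, we can find the joint characteristic function and the joint pdf of the jointly Gaussian random variables X and Y using the forms for a Gaussian random vector with n = 2, X$ _1 $ = X and X$ _2 $ = Y.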
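For n = 2 the Gaussian pdf can be written out with an explicit 2 × 2 inverse. The sketch below assumes the standard form of the multivariate Gaussian density with an invertible covariance matrix; the function names, mean vector, covariances and evaluation point are illustrative assumptions. It checks that with a diagonal covariance matrix the joint pdf equals the product of the two marginal Gaussian pdfs, and that this factorization fails once a nonzero covariance is introduced.

```python
import math


def gaussian_pdf_2d(x, mu, C):
    """Bivariate Gaussian pdf with mean mu and invertible 2x2 covariance C."""
    det = C[0][0] * C[1][1] - C[0][1] * C[1][0]
    if det <= 0:
        raise ValueError("covariance matrix must be positive definite")
    # Explicit inverse of a 2x2 matrix.
    inv = [[C[1][1] / det, -C[0][1] / det],
           [-C[1][0] / det, C[0][0] / det]]
    d = [x[0] - mu[0], x[1] - mu[1]]
    q = sum(d[j] * inv[j][k] * d[k] for j in range(2) for k in range(2))
    return math.exp(-0.5 * q) / (2 * math.pi * math.sqrt(det))


def gaussian_pdf_1d(x, mu, var):
    """Scalar Gaussian pdf with mean mu and variance var."""
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


mu = [1.0, -2.0]
point = [0.3, -1.6]

# Uncorrelated case: the joint pdf factors into the marginals.
C_diag = [[4.0, 0.0], [0.0, 0.25]]
joint = gaussian_pdf_2d(point, mu, C_diag)
product = (gaussian_pdf_1d(point[0], mu[0], 4.0)
           * gaussian_pdf_1d(point[1], mu[1], 0.25))

# Correlated case with the same variances: no factorization.
C_corr = [[4.0, 0.8], [0.8, 0.25]]
joint_corr = gaussian_pdf_2d(point, mu, C_corr)
print(joint, product, joint_corr)
```

The explicit inverse keeps the example dependency-free; for larger n a linear algebra library would be the more practical choice.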

References

- M. Comer. ECE 600. Class Lecture. Random Variables and Signals. Faculty of Electrical Engineering, Purdue University. Fall 2013.
