| Line 146: | Line 146: | ||

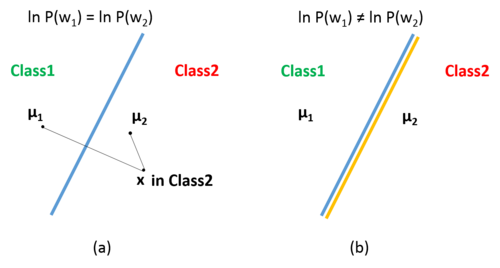

<center>[[Image:case1_1.png|500px|thumb|left|alt=Alt text|<font size= 2> '''Figure 1. Geometric Interpretation of Case 1: (a) two classes have the same prior probability; (b) two classes do not have the same prior probabilities.''' </font size>]] </center><br /> | <center>[[Image:case1_1.png|500px|thumb|left|alt=Alt text|<font size= 2> '''Figure 1. Geometric Interpretation of Case 1: (a) two classes have the same prior probability; (b) two classes do not have the same prior probabilities.''' </font size>]] </center><br /> | ||

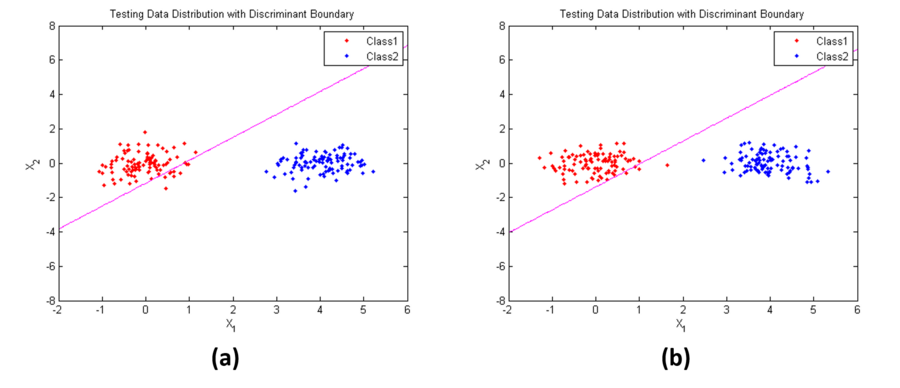

| − | Figure 2 shows the | + | Figure 2 shows an example of '''''Case I'''''. Parameters used here are: |

| + | |||

| + | <math>\mathbf{\mu_1}=[0,3]^t</math>, | ||

| + | |||

| + | <math>\mathbf{\mu_2}=[4,0]^t</math>, | ||

| + | |||

| + | <math>\mathbf{\Sigma}_1=\mathbf{\Sigma}_2=\begin{bmatrix} | ||

| + | 0.3 & 0\\ | ||

| + | 0 & 0.3 | ||

| + | \end{bmatrix}</math> | ||

| + | |||

| + | in (a), <math>P(w_1)=P(w_2)=0.5</math>; while in (b), <math>P(w_1)= 0.1, P(w_2)=0.9</math>. From the results, we find that when the prior probabilities are not the same, the position of the decision boundary is relatively insensitive (discriminant line is rotate in the counter clock direction a little bit). | ||

| + | |||

| + | <center>[[Image:case1_2.png|900px|thumb|left|alt=Alt text|<font size= 2> '''Figure 2. Example of Case 1: (a) two classes have the same prior probability; (b) Class2 has a higher prior probability.''' </font size>]] </center><br /> | ||

Revision as of 06:31, 28 April 2014

Discussion about Discriminant Functions for the Multivariate Normal Density

(partially based on Prof. Mireille Boutin's ECE 662 lecture)

Multivariate Normal Density

Because of its mathematical tractability and because of the central limit theorem, the multivariate normal density, also known as the Gaussian density, has received more attention than the various other density functions investigated in pattern recognition.

The general multivariate normal density in d dimensions is:

$ p(\mathbf{x})=\frac{1}{(2\pi)^{d/2}| \mathbf{\Sigma} |^{1/2} }\exp\left[ -\frac{1}{2} (\mathbf{x}-\mathbf{\mu})^t \mathbf{\Sigma} ^{-1}(\mathbf{x}-\mathbf{\mu}) \right]~~~~(1) $

where

$ \mathbf{x}=[x_1,x_2,\cdots ,x_d]^t $ is a d-component column vector,

$ \mathbf{\mu}=[\mu_1,\mu_2,\cdots ,\mu_d]^t $ is the d-component mean vector,

$ \mathbf{\Sigma}=\begin{bmatrix} \sigma_1^2 & \cdots & \sigma_{1d}\\ \vdots & \ddots & \vdots\\ \sigma_{d1} & \cdots & \sigma_d^2 \end{bmatrix} $ is the d-by-d covariance matrix, with the variances $ \sigma_i^2 $ on the diagonal and the covariances $ \sigma_{ij} $ off the diagonal,

$ |\mathbf{\Sigma}| $ is its determinant,

$ \mathbf{\Sigma}^{-1} $ is its inverse.
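To make Equation (1) concrete, here is a minimal sketch in plain Python (an illustrative choice; the original page contains no code) that evaluates the density for the two-dimensional case used later on this page. The function name `mvn_pdf_2d` is hypothetical, and the 2x2 inverse and determinant are written out in closed form so the example needs no external libraries.

```python
import math


def mvn_pdf_2d(x, mu, sigma):
    """Evaluate Equation (1) for d = 2.

    x, mu: length-2 sequences; sigma: 2x2 nested list, assumed symmetric positive definite.
    """
    (a, b), (c, d) = sigma
    det = a * d - b * c  # |Sigma|
    if det <= 0:
        # a positive-definite matrix always has a positive determinant
        raise ValueError("covariance matrix must be positive definite")
    inv = [[d / det, -b / det], [-c / det, a / det]]  # closed-form 2x2 inverse Sigma^{-1}
    diff = [x[0] - mu[0], x[1] - mu[1]]  # x - mu
    # quadratic form (x - mu)^t Sigma^{-1} (x - mu)
    quad = sum(diff[i] * inv[i][j] * diff[j] for i in range(2) for j in range(2))
    dim = 2
    norm = (2 * math.pi) ** (dim / 2) * math.sqrt(det)  # (2 pi)^{d/2} |Sigma|^{1/2}
    return math.exp(-0.5 * quad) / norm
```

For example, `mvn_pdf_2d([0, 0], [0, 0], [[1, 0], [0, 1]])` evaluates to $ 1/(2\pi) $, the peak value of a standard bivariate normal density.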

Each marginal density satisfies $ p(x_i)\sim N(\mu_i,\sigma_i^2) $, and $ \mathbf{\Sigma} $ plays a role similar to the one $ \sigma^2 $ plays in one dimension. The off-diagonal entries $ \sigma_{ij} $ of $ \mathbf{\Sigma} $ describe the relationship between pairs of features $ x_i $ and $ x_j $:

- $ \sigma_{ij}=0 $: $ x_i $ and $ x_j $ are uncorrelated (for jointly normal features, this also means they are independent);

- $ \sigma_{ij}>0 $: $ x_i $ and $ x_j $ are positively correlated;

- $ \sigma_{ij}<0 $: $ x_i $ and $ x_j $ are negatively correlated.
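The sign rules above can be seen on data with a small sketch that estimates $ \sigma_{ij} $ from paired samples of two features. The helper name `sample_covariance` is hypothetical and uses the usual unbiased estimator (dividing by n - 1). Keep in mind that a zero covariance only rules out linear correlation; it implies independence when the features are jointly normal, as assumed on this page.

```python
def sample_covariance(xs, ys):
    """Unbiased sample estimate of sigma_ij from paired observations of x_i and x_j."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired observations of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # average product of deviations from the means, with the n - 1 correction
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)


print(sample_covariance([1, 2, 3], [2, 4, 6]))  # prints 2.0 (positive correlation)
print(sample_covariance([1, 2, 3], [6, 4, 2]))  # prints -2.0 (negative correlation)
```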

Discriminant Functions

One of the most useful ways to represent a pattern classifier is in terms of a set of discriminant functions $ g_i(\mathbf{x}), i=1,2,...,c $. The classifier assigns a feature vector $ \mathbf{x} $ to class $ w_i $ if

$ g_i(\mathbf{x}) > g_j(\mathbf{x}) ~~~~~\text{for all } j \neq i $

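Classification can thus be viewed as the process of computing the discriminant functions and selecting the category with the largest discriminant. A Bayes classifier is easily represented in this way: for minimum-error-rate classification we can take $ g_i(\mathbf{x})=P(w_i|\mathbf{x}) $. Because replacing every $ g_i(\mathbf{x}) $ by a monotonically increasing function of it leaves the decision unchanged, the computation is simplified by taking the natural logarithm and dropping the evidence $ p(\mathbf{x}) $, which is the same for all classes: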

$ g_i(\mathbf{x})=\ln p(\mathbf{x}|w_i)+\ln P(w_i)~~~~(2) $
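The decision rule $ g_i(\mathbf{x}) > g_j(\mathbf{x}) $ combined with Equation (2) can be sketched in a few lines of Python. This is a hypothetical helper, not code from the lecture: `classify` takes one likelihood function $ p(\mathbf{x}|w_i) $ per class plus the priors and returns the index of the largest discriminant. A one-dimensional normal density (Equation (1) with d = 1) is included only so the usage example runs.

```python
import math
from typing import Callable, Sequence


def normal_pdf_1d(x: float, mu: float, var: float) -> float:
    """Equation (1) with d = 1: the N(mu, var) density evaluated at x."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2 * math.pi * var)


def classify(x, likelihoods: Sequence[Callable], priors: Sequence[float]) -> int:
    """Return the index i with the largest g_i(x) = ln p(x|w_i) + ln P(w_i), as in Equation (2).

    Assumes every likelihood value and prior is strictly positive (math.log(0) raises ValueError).
    """
    scores = [math.log(p(x)) + math.log(prior) for p, prior in zip(likelihoods, priors)]
    return max(range(len(scores)), key=scores.__getitem__)


# Usage: two 1-D classes with means 0 and 4 and unit variance.
densities = [lambda x: normal_pdf_1d(x, 0.0, 1.0), lambda x: normal_pdf_1d(x, 4.0, 1.0)]
print(classify(1.0, densities, [0.5, 0.5]))    # prints 0: x is nearer to mean 0
print(classify(1.0, densities, [0.01, 0.99]))  # prints 1: the large prior of class 1 wins
```

Working with log-densities also avoids multiplying very small probabilities, which is one practical reason the logarithmic form is preferred.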

Equation (2) is easy to evaluate when the class-conditional densities are normal, $ p(\mathbf{x}|w_i) \sim N(\mathbf{\mu}_i, \mathbf{\Sigma}_i) $. Substituting Equation (1) into Equation (2) gives the following discriminant function for the multivariate normal density:

$ g_i(\mathbf{x})=-\frac{1}{2}(\mathbf{x}-\mathbf{\mu}_i)^t \mathbf{\Sigma}_i^{-1}(\mathbf{x}-\mathbf{\mu}_i)-\frac{d}{2} \ln 2\pi -\frac{1}{2}\ln |\mathbf{\Sigma}_i|+\ln P(w_i)~~~~(3) $
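Equation (3) can be transcribed term by term for the two-dimensional case. The sketch below is a hypothetical helper in plain Python (not from the lecture) that keeps all four terms, including the ones that the special cases later drop, so it equals $ \ln p(\mathbf{x}|w_i)+\ln P(w_i) $ exactly.

```python
import math


def gaussian_discriminant_2d(x, mu, sigma, prior):
    """Equation (3) for d = 2: g_i(x) for mean mu, 2x2 covariance sigma, and prior P(w_i)."""
    (a, b), (c, d) = sigma
    det = a * d - b * c  # |Sigma_i|, assumed positive
    inv = [[d / det, -b / det], [-c / det, a / det]]  # closed-form 2x2 inverse Sigma_i^{-1}
    diff = [x[0] - mu[0], x[1] - mu[1]]  # x - mu_i
    quad = sum(diff[i] * inv[i][j] * diff[j] for i in range(2) for j in range(2))
    dim = 2
    return (-0.5 * quad                          # -(1/2)(x - mu_i)^t Sigma_i^{-1} (x - mu_i)
            - dim / 2 * math.log(2 * math.pi)    # -(d/2) ln 2 pi
            - 0.5 * math.log(det)                # -(1/2) ln |Sigma_i|
            + math.log(prior))                   # + ln P(w_i)


# Usage: two classes with spherical covariance; x = [1, 2] is closer to the first mean.
sigma = [[0.3, 0.0], [0.0, 0.3]]
g1 = gaussian_discriminant_2d([1.0, 2.0], [0.0, 3.0], sigma, 0.5)
g2 = gaussian_discriminant_2d([1.0, 2.0], [4.0, 0.0], sigma, 0.5)
print(1 if g1 > g2 else 2)  # prints 1
```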

We will use Equation (3) to discuss three special cases of classification in the next part. Throughout that discussion, we consider two classes and two-dimensional features.

Classification for Three Special Cases

Case 1:

$ \mathbf{\Sigma}_i = \sigma^2 \mathbf{I} $

In this case, the features $ x_1 $ and $ x_2 $ are independent and have the same variance $ \sigma^2 $ in every class; only the class means differ. That is,

$ \mathbf{\Sigma}_1 = \mathbf{\Sigma}_2 = \begin{bmatrix} \sigma^2 & 0\\ 0 & \sigma^2 \end{bmatrix} $

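Thus, $ \mathbf{\Sigma}_i^{-1}=(1/\sigma^2)\mathbf{I} $, and $ |\mathbf{\Sigma}_i|=\sigma^{2d} $ is the same for every class. Together with $ \frac{d}{2}\ln 2\pi $, it only adds a class-independent constant that can be dropped, so the discriminant function shown in Equation (3) simplifies to Equation (4):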

$ g_i(\mathbf{x})=-\frac{1}{2 \sigma^2}(\mathbf{x}-\mathbf{\mu}_i)^t (\mathbf{x}-\mathbf{\mu}_i)+\ln P(w_i)~~~~(4) $

Expanding the quadratic form in Equation (4), we obtain

$ g_i(\mathbf{x})=-\frac{1}{2 \sigma^2}(\mathbf{x}^t \mathbf{x} -\mathbf{\mu}_i^t \mathbf{x}- \mathbf{x}^t \mathbf{\mu}_i+ \mathbf{\mu}_i^t \mathbf{\mu}_i)+\ln P(w_i)~~~~(5) $

Since $ \mathbf{x}^t \mathbf{x} $ is the same for all classes, it can be dropped; and since $ \mathbf{\mu}_i^t \mathbf{x} = \mathbf{x}^t \mathbf{\mu}_i $, Equation (5) becomes

$ g_i(\mathbf{x})=-\frac{1}{2 \sigma^2}(-2 \mathbf{\mu}_i^t \mathbf{x}+\mathbf{\mu}_i^t \mathbf{\mu}_i)+\ln P(w_i) = \frac{\mathbf{\mu}_i^t}{\sigma^2} \mathbf{x}+\left(-\frac{\mathbf{\mu}_i^t \mathbf{\mu}_i}{2 \sigma^2}+\ln P(w_i) \right) ~~~~(6) $

Since $ \frac{\mathbf{\mu}_i^t}{\sigma^2} $ and $ -\frac{\mathbf{\mu}_i^t \mathbf{\mu}_i}{2 \sigma^2}+\ln P(w_i) $ do not depend on $ \mathbf{x} $, we can define the weight vector $ \mathbf{w}_i=\frac{\mathbf{\mu}_i}{\sigma^2} $ and the bias $ w_{i0}=-\frac{\mathbf{\mu}_i^t \mathbf{\mu}_i}{2 \sigma^2}+\ln P(w_i) $ (not to be confused with the class label $ w_i $), and obtain

$ g_i(\mathbf{x})=\mathbf{w}_i^t \mathbf{x}+w_{i0} ~~~~(7) $

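Since Equation (7) is linear in $ \mathbf{x} $, the classifier in Case I is a linear classifier: the decision boundary between two classes, where $ g_1(\mathbf{x})=g_2(\mathbf{x}) $, is a hyperplane (a line for two-dimensional features).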
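The step from Equation (4) to Equation (7) can be checked numerically. In this minimal sketch (function names hypothetical, plain Python), the two forms differ by exactly $ -\mathbf{x}^t\mathbf{x}/(2\sigma^2) $, the term dropped between Equations (5) and (6). That difference is the same for every class, so it cannot change which class has the largest discriminant.

```python
import math


def g_eq4(x, mu, sigma2, prior):
    # Equation (4): -(x - mu_i)^t (x - mu_i) / (2 sigma^2) + ln P(w_i)
    return -sum((a - b) ** 2 for a, b in zip(x, mu)) / (2 * sigma2) + math.log(prior)


def g_eq7(x, mu, sigma2, prior):
    # Equation (7): w_i^t x + w_i0, with w_i = mu_i / sigma^2
    w = [m / sigma2 for m in mu]
    w0 = -sum(m * m for m in mu) / (2 * sigma2) + math.log(prior)
    return sum(a * b for a, b in zip(w, x)) + w0


x, sigma2 = [1.0, 2.0], 0.3
for mu, prior in ([[0.0, 3.0], 0.1], [[4.0, 0.0], 0.9]):
    gap = g_eq4(x, mu, sigma2, prior) - g_eq7(x, mu, sigma2, prior)
    print(round(gap, 6))  # same for both classes: -x^t x / (2 sigma^2)
```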

Figure 1 shows the geometric interpretation of Case I. If the prior probabilities $ P(w_i) $ are the same for all classes, a feature vector $ \mathbf{x} $ is simply assigned to the class of the nearest mean, as shown in (a). If the prior probabilities $ P(w_i) $ differ, the decision boundary shifts away from the more likely mean, as shown in (b).
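The geometric statement above can be illustrated with a small sketch (hypothetical helper names, plain Python). With equal priors, picking the largest Case I discriminant from Equation (4) gives the same answer as the nearest-mean rule; with unequal priors, points just on the near side of the midpoint can be assigned to the more probable class instead. The means and variance are borrowed from the Figure 2 example.

```python
import math


def case1_discriminant(x, mu, sigma2, prior):
    # Equation (4): g_i(x) = -||x - mu_i||^2 / (2 sigma^2) + ln P(w_i)
    return -sum((a - b) ** 2 for a, b in zip(x, mu)) / (2 * sigma2) + math.log(prior)


def bayes_class(x, mus, sigma2, priors):
    # index of the largest discriminant
    scores = [case1_discriminant(x, mu, sigma2, p) for mu, p in zip(mus, priors)]
    return max(range(len(mus)), key=scores.__getitem__)


def nearest_mean_class(x, mus):
    # index of the mean with the smallest squared Euclidean distance to x
    d2 = [sum((a - b) ** 2 for a, b in zip(x, mu)) for mu in mus]
    return min(range(len(mus)), key=d2.__getitem__)


mus = [[0.0, 3.0], [4.0, 0.0]]
sigma2 = 0.3
x = [1.96, 1.53]  # just on the mu_1 side of the midpoint [2, 1.5]
print(nearest_mean_class(x, mus))               # prints 0
print(bayes_class(x, mus, sigma2, [0.5, 0.5]))  # prints 0
print(bayes_class(x, mus, sigma2, [0.1, 0.9]))  # prints 1
```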

Figure 2 shows an example of Case I. Parameters used here are:

$ \mathbf{\mu_1}=[0,3]^t $,

$ \mathbf{\mu_2}=[4,0]^t $,

$ \mathbf{\Sigma}_1=\mathbf{\Sigma}_2=\begin{bmatrix} 0.3 & 0\\ 0 & 0.3 \end{bmatrix} $

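In (a), $ P(w_1)=P(w_2)=0.5 $; in (b), $ P(w_1)= 0.1, P(w_2)=0.9 $. The results show that the position of the decision boundary is relatively insensitive to the unequal priors here, because $ \sigma^2=0.3 $ is small compared with the squared distance between the means ($ \|\mathbf{\mu}_1-\mathbf{\mu}_2\|^2=25 $): the boundary moves only slightly toward $ \mathbf{\mu}_1 $, away from the more likely mean $ \mathbf{\mu}_2 $. From Equation (6), the boundary $ g_1(\mathbf{x})=g_2(\mathbf{x}) $ has normal vector $ \mathbf{\mu}_1-\mathbf{\mu}_2 $ regardless of the priors, so it is translated parallel to itself rather than rotated.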
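A short sketch (hypothetical function name, plain Python) quantifies the Figure 2 example. Setting $ g_1(\mathbf{x})=g_2(\mathbf{x}) $ in Equation (6) and rearranging gives the boundary $ \mathbf{w}^t(\mathbf{x}-\mathbf{x}_0)=0 $ with $ \mathbf{w}=\mathbf{\mu}_1-\mathbf{\mu}_2 $ and $ \mathbf{x}_0=\frac{1}{2}(\mathbf{\mu}_1+\mathbf{\mu}_2)-\frac{\sigma^2}{\|\mathbf{\mu}_1-\mathbf{\mu}_2\|^2}\ln\frac{P(w_1)}{P(w_2)}(\mathbf{\mu}_1-\mathbf{\mu}_2) $. This closed form is our own rearrangement of Equation (6), not a formula stated on this page.

```python
import math


def case1_boundary(mu1, mu2, sigma2, p1, p2):
    """Return (w, x0) such that the Case 1 boundary g_1(x) = g_2(x) is w^t (x - x0) = 0."""
    w = [a - b for a, b in zip(mu1, mu2)]  # normal vector mu1 - mu2: independent of the priors
    dist2 = sum(c * c for c in w)          # ||mu1 - mu2||^2
    shift = sigma2 / dist2 * math.log(p1 / p2)
    x0 = [(a + b) / 2 - shift * c for a, b, c in zip(mu1, mu2, w)]
    return w, x0


mu1, mu2, sigma2 = [0.0, 3.0], [4.0, 0.0], 0.3          # Figure 2 parameters
w_a, x0_a = case1_boundary(mu1, mu2, sigma2, 0.5, 0.5)  # panel (a)
w_b, x0_b = case1_boundary(mu1, mu2, sigma2, 0.1, 0.9)  # panel (b)
print(w_a == w_b)              # prints True: same orientation, no rotation
print(x0_a)                    # prints [2.0, 1.5], the midpoint of the means
print(x0_b)                    # moved toward mu1
print(math.dist(x0_a, x0_b))   # about 0.132
```

The boundary in (b) is therefore the line of (a) translated by roughly 0.13 units toward $ \mathbf{\mu}_1 $.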