MP3 Basics

Background

- MPEG (Moving Picture Experts Group) was established in 1988 by the ISO (International Organization for Standardization) and the IEC (International Electrotechnical Commission).

- In 1993, standards for audio compression were released. Three layers of increasing complexity were defined, known as MPEG-1 Audio Layers 1, 2, and 3.

- Layers 1 and 2 were based on the MUSICAM technology. Layer 3, the most complex, was based on ASPEC (Adaptive Spectral Perceptual Entropy Coding). MPEG-1 Audio Layer 3 is now known simply as MP3.

- ASPEC was developed by the Fraunhofer Institute for Integrated Circuits, which, with its partner Thomson Multimedia, still holds many patents for MP3 encoding and decoding technology.

Basic Information

Goal: To compress files as much as possible while retaining the same perceived audio quality as the original signal.

- Uses lossy audio coding, also known as perceptive coding, to take advantage of the imperfections in human perception. It also uses traditional lossless compression techniques, such as Huffman coding.

- MP3 is a standard. It standardizes the file format and the decoding process but only suggests encoding methods, so many different encoding schemes exist.

- Very popular form of compression for music, especially for downloading over the internet, playing on portable music players, and storing large amounts of music.

- Other types of audio files, such as WAV, AAC, WMA, and OGG, are also popular.

Perceptive Coding

There is much more information in a signal than the human ear and mind process. This extra information can be eliminated to make gains in compression. Also, sounds that are perceived, but not as well as other sounds, can be further compressed. A detailed model of human perception must be developed so only unnecessary information is eliminated or harshly compressed. Psychoacoustics, the study of how humans perceive sound, is used to do this.

Some facts about human perception that perceptive coding uses:

- Humans can only perceive sound between roughly 20 Hz and 20 kHz.

- Our sensitivity to sound is different at different frequencies.

- Frequency masking: when a high energy signal is within a certain frequency range of a low energy signal, only the high energy signal will be perceived.

- Temporal masking: when two sounds are very close in time and one is much louder than the other, we only perceive the louder sound.

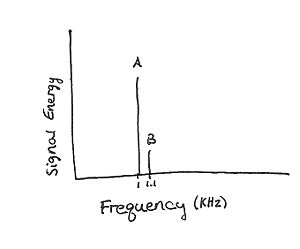

Figure 1 is an example of frequency masking. It shows the frequency spectrum of a signal composed of a sine wave with frequency 1 kHz added to a sine wave with frequency 1.1 kHz. The 1 kHz sine wave has a much higher amplitude, and therefore a much higher energy than the 1.1 kHz. In this example, only the 1 kHz wave will be perceived.
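The two-tone signal described for Figure 1 can be reproduced numerically. The sketch below builds the sum of a loud 1 kHz sine and a quiet 1.1 kHz sine and measures how far apart the two spectral peaks are in decibels. The sample rate, signal length, and both amplitudes are assumptions chosen for illustration and are not taken from the figure. Whether the quieter tone is actually masked depends on a psychoacoustic model, which this sketch does not include.

```python
import math

SAMPLE_RATE = 8000   # Hz; assumed, comfortably above twice 1.1 kHz
NUM_SAMPLES = 800    # assumed; gives 10 Hz per DFT bin, so both tones land on exact bins


def two_tone(amp_loud=1.0, amp_quiet=0.01):
    """Sum of a loud 1 kHz sine and a quiet 1.1 kHz sine (amplitudes are assumed)."""
    return [
        amp_loud * math.sin(2 * math.pi * 1000 * n / SAMPLE_RATE)
        + amp_quiet * math.sin(2 * math.pi * 1100 * n / SAMPLE_RATE)
        for n in range(NUM_SAMPLES)
    ]


def dft_magnitude(signal, freq_hz):
    """Magnitude of the single DFT bin nearest freq_hz, scaled so a unit sine gives about 1."""
    total = len(signal)
    k = round(freq_hz * total / SAMPLE_RATE)
    re = sum(x * math.cos(2 * math.pi * k * n / total) for n, x in enumerate(signal))
    im = -sum(x * math.sin(2 * math.pi * k * n / total) for n, x in enumerate(signal))
    return 2 * math.hypot(re, im) / total


def level_difference_db(signal):
    """How many dB the 1 kHz peak sits above the 1.1 kHz peak."""
    loud = dft_magnitude(signal, 1000)
    quiet = dft_magnitude(signal, 1100)
    return 20 * math.log10(loud / quiet)


if __name__ == "__main__":
    print(round(level_difference_db(two_tone()), 1), "dB")
```

With the assumed amplitude ratio of 100 to 1, the peaks are about 40 dB apart. A perceptual coder compares gaps like this against its masking model to decide how few bits the quieter component needs.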

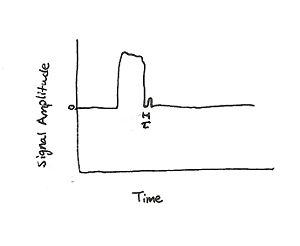

Figure 2 is an example of temporal masking. A high amplitude signal occurs very close in time to a small amplitude signal. If $ \tau $, the time difference between the signals, is small enough, only the signal with the large amplitude will be perceived.

Fig 1: Frequency Masking

Fig 2: Temporal Masking

Encoding

There are different encoding schemes used to achieve the MP3 format, but they have several things in common. The audio is sampled and processed in frames, each of which is a set of a fixed number of samples. Each frame is sent through a bank of filters that divides the original frequency spectrum of the signal into 32 sub-bands of equal width. The sub-bands are then processed separately but in parallel, and each is transformed into the frequency domain. A detailed model of human perception is compared to the frequency analysis of the frame to determine what can be eliminated, what can be heavily compressed, and what must be preserved in detail.
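The framing and sub-band layout described above can be sketched in a few lines. This is only bookkeeping, not a filter bank: it cuts a sample list into fixed-size frames and lists the frequency edges of 32 equal-width sub-bands. The frame size of 1152 samples is the MPEG-1 Layer 3 frame length; the function names and the zero-padding of the last frame are assumptions made for this illustration.

```python
SAMPLES_PER_FRAME = 1152   # samples per frame in MPEG-1 Layer 3
NUM_SUBBANDS = 32          # number of equal-width sub-bands


def split_into_frames(samples, frame_size=SAMPLES_PER_FRAME):
    """Cut samples into fixed-size frames, zero-padding the final frame (an assumed policy)."""
    frames = []
    for start in range(0, len(samples), frame_size):
        frame = list(samples[start:start + frame_size])
        frame.extend([0] * (frame_size - len(frame)))
        frames.append(frame)
    return frames


def subband_edges(sample_rate_hz, num_subbands=NUM_SUBBANDS):
    """(low, high) edges in Hz of equal-width sub-bands covering 0 Hz to half the sample rate."""
    width = (sample_rate_hz / 2) / num_subbands
    return [(i * width, (i + 1) * width) for i in range(num_subbands)]


if __name__ == "__main__":
    frames = split_into_frames(list(range(3000)))
    print(len(frames), "frames of", len(frames[0]), "samples")
    print("first sub-band at 44.1 kHz:", subband_edges(44100)[0])
```

At a 44.1 kHz sample rate each sub-band is 22050 / 32 = 689.0625 Hz wide. Equal widths keep the filter bank simple, although they do not match the ear's frequency resolution, which is finer at low frequencies.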

This information is sent to a non-uniform quantizer. Smaller step sizes are used when the largest component in a sub-band is small, and larger step sizes are used when a large component is present. Huffman coding then assigns shorter bit representations to frequently used quantizing codes and longer bit representations to infrequently used ones. A buffer is used to reduce pre-echo (hearing an artifact of a sound before the sound itself occurs) on transients, which are signals that last only a short time.
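The quantize-then-Huffman idea can be illustrated with a toy example. The sketch below picks a step size from the largest component in a block of values, rounds each value to a step index, and builds a Huffman code over the indices. It is a simplification under stated assumptions: the helper names, the `levels` parameter, and the sample values are all hypothetical, the quantizer here is a plain scaled rounding rather than the one a real MP3 encoder uses, and real encoders rely on predefined Huffman tables instead of building a code per block.

```python
import heapq
from collections import Counter


def peak_scaled_step(values, levels=3):
    """Step size proportional to the largest magnitude, so quiet blocks get finer steps."""
    peak = max(abs(v) for v in values)
    return peak / levels if peak > 0 else 1.0


def quantize(values, step):
    """Round each value to the nearest integer multiple of step and return the indices."""
    return [round(v / step) for v in values]


def huffman_code(symbols):
    """Build a prefix code {symbol: bitstring}; frequent symbols get shorter strings."""
    counts = Counter(symbols)
    if len(counts) == 1:
        return {next(iter(counts)): "0"}
    # Heap entries are (weight, unique tie-breaker, partial code table).
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(counts.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1
    return heap[0][2]


if __name__ == "__main__":
    # Hypothetical sub-band values: mostly near zero with two larger components.
    block = [0.02, -0.01, 0.0, 0.31, 0.03, -0.02, 0.01, -0.29, 0.0, 0.01]
    indices = quantize(block, peak_scaled_step(block))
    codes = huffman_code(indices)
    bits = "".join(codes[i] for i in indices)
    print(indices, codes, len(bits), "bits")
```

In this example most values quantize to index 0, so that index receives a one-bit code while the two rare indices receive two-bit codes. This is the saving that Huffman coding contributes after quantization.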

The audio signal is compressed and put into the standard form for MP3. This includes headers, which hold information used to encode and decode the frames, and the frames themselves. Each frame is sent with a header. The header includes a sync block, which allows the MP3 decoder to find the beginning of a valid frame. It also includes information on the type of MPEG used, the Layer, the sampling frequency, and other useful information for the decoder.
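To make the header fields concrete, the sketch below decodes the first four bytes of a frame. It handles only MPEG-1 Layer 3 headers and reads the sync bits, version, layer, bit rate, sampling frequency, and padding flag. The function name and the returned dictionary are assumptions made for this illustration, and the remaining header fields are ignored. The example bytes `FF FB 90 64` are an illustrative header value, not data from any particular file.

```python
# Lookup tables for MPEG-1 Layer 3; None marks index values this sketch does not handle.
BITRATES_KBPS = [None, 32, 40, 48, 56, 64, 80, 96, 112, 128, 160, 192, 224, 256, 320, None]
SAMPLE_RATES_HZ = [44100, 48000, 32000, None]


def parse_mpeg1_layer3_header(header_bytes):
    """Decode a 4-byte frame header; raise ValueError if it is not MPEG-1 Layer 3."""
    if len(header_bytes) < 4:
        raise ValueError("need at least 4 bytes")
    word = int.from_bytes(header_bytes[:4], "big")

    if (word >> 21) & 0x7FF != 0x7FF:          # 11 sync bits, all set
        raise ValueError("sync bits not found")
    if (word >> 19) & 0x3 != 0b11:             # version field: 11 means MPEG-1
        raise ValueError("not MPEG-1")
    if (word >> 17) & 0x3 != 0b01:             # layer field: 01 means Layer 3
        raise ValueError("not Layer 3")

    bitrate_kbps = BITRATES_KBPS[(word >> 12) & 0xF]
    sample_rate_hz = SAMPLE_RATES_HZ[(word >> 10) & 0x3]
    padding = (word >> 9) & 0x1
    if bitrate_kbps is None or sample_rate_hz is None:
        raise ValueError("unsupported bit rate or sampling frequency index")

    # Frame length in bytes, header included: 1152 samples / 8 bits = 144.
    frame_bytes = 144 * bitrate_kbps * 1000 // sample_rate_hz + padding
    return {
        "bitrate_kbps": bitrate_kbps,
        "sample_rate_hz": sample_rate_hz,
        "padding": padding,
        "frame_bytes": frame_bytes,
    }


if __name__ == "__main__":
    print(parse_mpeg1_layer3_header(bytes.fromhex("fffb9064")))
```

A decoder that loses its place in the stream can scan for the sync bits, parse a candidate header this way, and use the computed frame length to check that another valid header follows.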

Two main types of encoding schemes are constant bit rate (CBR) and variable bit rate (VBR). CBR encoders must produce output at a constant bit rate, regardless of input. VBR encoders have a defined quality that must be met and adjust the bit rate accordingly. VBR can produce higher quality audio for a broader range of signals, but is more difficult to decode. Some decoders are better able to handle VBR than others, although to meet the ISO standard, they must be able to decode VBR signals.

Quality

The size of a file and its quality are primarily determined by the bit rate. Bit rate is defined as the number of bits used to represent one second of sound, so 128 kbps means that 128,000 bits are used to represent 1 second of sound. Below are some examples of a jazz recording called Hot Swing by Kevin MacLeod at Royalty Free Music.

- Original uncompressed .wav file (converted from MP3), 5.38 MB (this file is 31 s long, not 21 s like the rest)
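The definition of bit rate turns directly into a file size estimate. The sketch below computes the approximate size of a constant bit rate file from its bit rate and duration, and compares it with uncompressed PCM audio. The PCM defaults (44.1 kHz, 16 bits, stereo) are assumptions, since the format of the original recording is not stated here, and header and metadata overhead are ignored.

```python
def compressed_size_bytes(bit_rate_kbps, duration_s):
    """Approximate size of a constant bit rate stream: bits per second times seconds, over 8."""
    return bit_rate_kbps * 1000 * duration_s / 8


def pcm_size_bytes(duration_s, sample_rate_hz=44100, bits_per_sample=16, channels=2):
    """Approximate size of uncompressed PCM audio (default parameters are assumed)."""
    return duration_s * sample_rate_hz * bits_per_sample * channels / 8


if __name__ == "__main__":
    duration = 21  # seconds, the clip length mentioned above
    mp3 = compressed_size_bytes(128, duration)
    wav = pcm_size_bytes(duration)
    print(f"128 kbps MP3: {mp3:.0f} bytes")
    print(f"PCM audio:    {wav:.0f} bytes")
    print(f"ratio:        {wav / mp3:.3f}")
```

Under these assumptions a 21 s clip takes 336,000 bytes at 128 kbps against 3,704,400 bytes uncompressed, a ratio of roughly 11 to 1.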

For Further Information

If you are interested in learning more about MP3, the following resources are a starting point.

- History of MP3

- Basic MP3 Information

- MP3 Basics

- Hacker, Scott. MP3: The Definitive Guide. Sebastopol, CA: O'Reilly & Associates, Inc., 2000. Print.

- Watkinson, John. An Introduction to Digital Audio. 2nd ed. Woburn, MA: Focal Press, 2002. Print.