In the K Nearest Neighbor (K-NN) technique, we need to store n samples of the training set. If n is very large, this storage will require a big memory. Moreover, the computation for the distances from a new sample to n training samples will be very huge. Editting technique is a way to reduce this memory and computation expenses.

The basic idea of this technique is to remove away from the training set any samples which contribute to the misclassification.

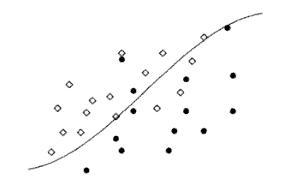

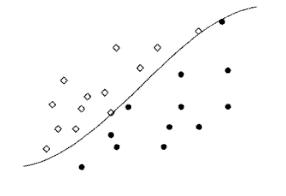

Figure 1 below shows the overlapping distributions of the training set of two classes along with their Bayes boundary. In the figure, in each region, we can observe some samples which are misclassified by Bayes rule. Removing these misclassfied sample will generate two homogeneous sets of samples with the a nearest neighbor decision boundary approximate the Bayes decision boundary (Fig. 2).

Figure 1: Two overlapping distributions along with the Bayes decision boundary

Figure 2: The distributions in Fig. 1 after removing misclassified samples

The followings are the algorithm of the editing technique for the K-NN rule:

1. Divide the training set $ R $ to $ N $ partitions: $ R_1,R_2,...,R_N $.

2. Classify the samples in the set $ R_i $ using K-NN with the union of the "next" $ M $ sets $ R_{\left( {i + 1} \right)\bmod N} \cup ... \cup R_{\left( {i + M - 1} \right)\bmod N} $ as the design set, for $ i=1,...,N $ where $ 1 \le M \le N - 1 $. Let $ S $ be the set of misclassified samples.

3. $ R=R-S $

4. Repeat to 1 until $ S $ is empty.

Reference: Andrew Webb, "Statistical Pattern Recognition" , 2nd, Wiley, 2001.